Intro

Have you noticed your site’s traffic fluctuating from time to time?

Google keeps updating its search algorithms, and many of these updates expose issues that were already hurting your site. Often, those issues are technical.

In this article, we’re looking at 13 technical SEO issues that still trip up websites in 2026. These are the kinds of problems that affect how your site gets crawled, indexed, and experienced by users, as well as how it gets interpreted and cited in AI-driven search results. In fact, as search continues to shift toward AI-generated answers, technical SEO plays a major role in making sure your content is actually usable by these systems.

A thorough SEO audit can uncover any hidden problems you might miss at first glance, but before that, you need to understand what you’re dealing with and what you need to do about it.

1. Missing Alt Text on Images

Plenty of sites are missing alt attributes on images, making it one of the most prevalent image SEO issues. According to WebAIM’s study of the top 1 million home pages, ~55% of reviewed pages were missing image alt text. This directly affects rankings in image search. And it’s not just an SEO problem. It also hurts accessibility and user experience overall.

Alt text helps screen readers describe images to users who rely on them. It also gives search engines context about what an image represents, which affects visibility in image search, too.

The fix is pretty straightforward. Add clear, descriptive, keyword-relevant (but not keyword-heavy!) text. Keep it an appropriate length. Go for a concise description rather than a full sentence.
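To find the gaps in the first place, you can scan a page's HTML for images without alt text. Here's a minimal sketch using only Python's standard library. The class name, function name, and sample markup are made up for illustration, and an empty alt can be perfectly valid for decorative images, so treat the output as a review list rather than a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> whose alt attribute is absent or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Flag both a missing alt and an empty one; a human should decide
        # whether an empty alt marks a genuinely decorative image.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src", "(no src)"))


def find_images_missing_alt(html):
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical sample markup
sample_html = """
<img src="/img/hero.jpg" alt="Team reviewing a site audit report">
<img src="/img/chart.png">
<img src="/img/divider.png" alt="">
"""
print(find_images_missing_alt(sample_html))
```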

2. Broken Links, Redirect Chains, and Status Code Errors

Broken links and redirect issues quietly waste crawl budget and create friction for users.

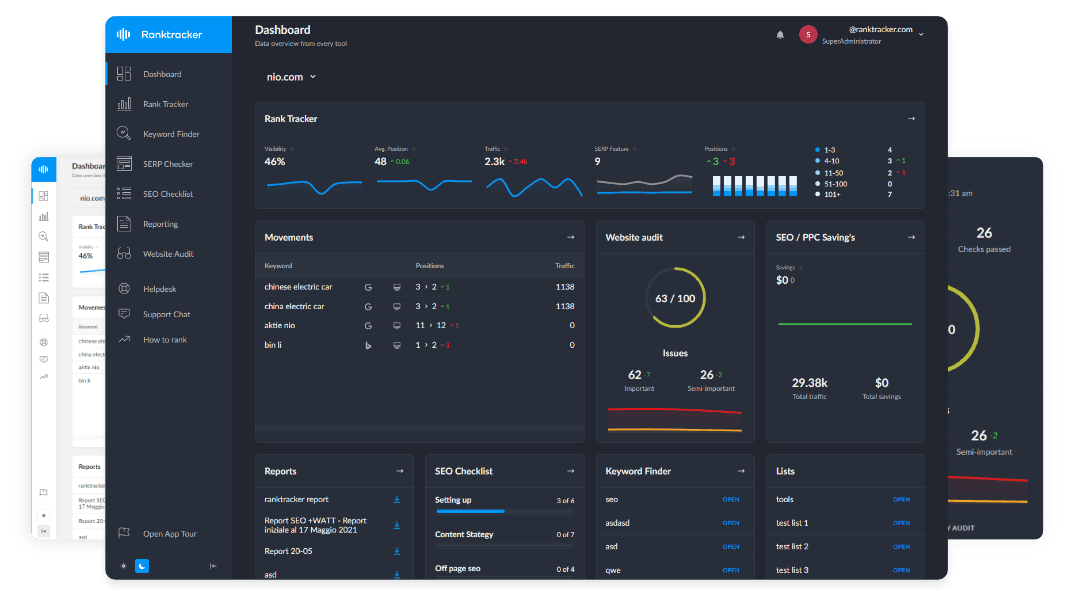

Crawl budget refers to the number of pages Googlebot can process in a set time. When that budget gets spent on dead ends or unnecessary redirects, important pages might get ignored.

These issues can show up as:

- 4XX errors like 404s

- Soft 404s (pages that return a 200 status but show an error message or little to no content)

- Wrong or inconsistent status codes

- Broken or endlessly redirecting (aka looping) internal and external links

The fallout from these problems includes frustrating user experience and wasted crawl resources. They can also affect AI search visibility by making it harder for AI-driven systems to consistently access and interpret your content.

To sort these out, run a full crawl of your website with a reliable tool, identify errors, replace broken links, and simplify redirects. Wherever possible, link directly to the final destination instead of relying on chains.
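Once you have a crawl export, collapsing chains is mostly bookkeeping. The sketch below assumes you've already turned that export into a simple mapping of source URL to redirect target (the `redirect_map` dict and its paths are hypothetical). It walks each chain to its final destination and flags loops, so you know where to point your links.

```python
def follow_redirects(start_url, redirect_map, max_hops=10):
    """Return (final_url, hops, is_loop) for start_url.

    redirect_map is an assumed {source_url: target_url} dict built from a
    crawl export; URLs that are not keys in the map are treated as final.
    """
    seen = [start_url]
    current = start_url
    while current in redirect_map and len(seen) <= max_hops:
        current = redirect_map[current]
        if current in seen:
            # We have come back to a URL already visited: a redirect loop.
            return current, len(seen), True
        seen.append(current)
    return current, len(seen) - 1, False


# Hypothetical crawl data
redirect_map = {
    "/old": "/interim",
    "/interim": "/new",
    "/a": "/b",
    "/b": "/a",
}

for url in ["/old", "/a", "/new"]:
    print(url, follow_redirects(url, redirect_map))
```

Any URL that reports more than one hop is a chain worth flattening: update the link (and the first redirect) to point straight at the final URL.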

3. Core Web Vitals Degradation

Core Web Vitals track real user experience and interaction through three key metrics. These are:

- Largest Contentful Paint (LCP): how quickly the main content loads

- Interaction to Next Paint (INP, which replaced First Input Delay): how responsive the page feels

- Cumulative Layout Shift (CLS): how stable the layout is

Google has set thresholds for each of these, which help you identify where your website falls short. For INP, for instance, a value of 200 milliseconds or less is ‘good,’ between 200 and 500 ms ‘needs improvement,’ and above 500 ms ‘poor,’ based on Google’s guidelines.
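If you collect your own field data, the INP bands above are easy to apply in code. This is a rough sketch: the function names and sample values are hypothetical, and it uses a simple nearest-rank 75th percentile, since Google assesses Core Web Vitals at the 75th percentile of page loads.

```python
import math


def classify_inp(inp_ms):
    """Map an INP value in milliseconds to Google's published bands."""
    if inp_ms <= 200:
        return "good"
    if inp_ms <= 500:
        return "needs improvement"
    return "poor"


def percentile_75(values):
    """Nearest-rank 75th percentile of a non-empty list of numbers."""
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


# Hypothetical INP samples (ms) collected from real user sessions
samples = [120, 180, 90, 640, 210, 150, 170, 230]
p75 = percentile_75(samples)
print(p75, classify_inp(p75))
```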

Heavy media files, third-party scripts, and poorly managed layouts are often behind these poor scores. They ruin the user experience in the process, too: pages feel slow, clicks lag, and layouts shift unexpectedly. These performance issues can also limit how reliably your content gets processed and mentioned in AI-generated results in real time.

Taking steps like compressing images, minifying JavaScript and CSS files, and delivering content more efficiently through things like CDNs can boost your CWV numbers. Regular audits at scale also keep performance in check.

4. Indexing Problems and “Invisible” Pages

Even when pages are crawlable, they may never make it into the index and thus never show up in SERPs.

Common causes include:

- Accidental noindex tags

- Incorrect or missing canonical tags

- Duplicate URLs competing with each other (aka cannibalization)

- Misconfigured robots.txt rules

There’s also the issue of index bloat, where low-value pages get indexed and dilute your overall site quality.

When too many similar or low-quality pages exist, search engines struggle to decide what’s worth ranking. This can also create confusion for AI systems trying to identify which version of your content to pick up.

Fixing this means reviewing your directives carefully. Make sure important pages are indexable, duplicates are consolidated, and low-value pages are handled intentionally.
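A quick way to review directives is to pull the robots meta tag and the canonical link out of each page and compare them with the URL you expect to rank. The sketch below is one possible approach, not a full audit: the class and function names, URLs, and markup are assumptions, and a canonical pointing elsewhere isn't always wrong, so it's labeled as something to review.

```python
from html.parser import HTMLParser


class IndexSignals(HTMLParser):
    """Extracts the robots meta directive and canonical link from a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            if "noindex" in (attributes.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonical = attributes.get("href")


def audit_page(url, html):
    """Return a list of indexing issues to review for one page."""
    signals = IndexSignals()
    signals.feed(html)
    issues = []
    if signals.noindex:
        issues.append("noindex")
    if signals.canonical is None:
        issues.append("missing canonical")
    elif signals.canonical != url:
        issues.append("canonical points elsewhere (review)")
    return issues


# Hypothetical page
page_html = (
    '<head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/pricing"></head>'
)
print(audit_page("https://example.com/pricing", page_html))
```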

5. Duplicate Content and URL Variants

Duplicate content isn’t always obvious. A lot of it comes from how URLs are structured.

Different versions of the same page can exist due to:

- HTTP vs HTTPS

- Trailing slashes leading to inconsistency

- URL parameters that create infinite variants

- Pagination mistakes

- Faceted navigation

In some cases, these variations can create near-infinite combinations of URLs pointing to essentially the same content.

All these variations split ranking signals and create internal competition in traditional search, and they make it harder for AI-powered results to determine which version of your content is the most authoritative.

The goal here is consolidation. Use canonical tags to define the preferred version of a page, and make sure your metadata is unique where it needs to be.
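To see how many of your crawled URLs are really the same page, it helps to normalize them before comparing. Here's a minimal sketch. The list of parameters to drop and the choice to strip trailing slashes and force HTTPS are assumptions; swap in whatever policy your site actually follows.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change page content
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid"}


def normalize_url(url):
    """Collapse common URL variants into one comparable form."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    query.sort()  # parameter order should not create a "new" URL
    path = parts.path.rstrip("/") or "/"  # assumed policy: no trailing slash
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(query), ""))


# Hypothetical variants of one page
variants = [
    "http://Example.com/shoes/?utm_source=newsletter&color=red",
    "https://example.com/shoes?color=red",
    "https://example.com/shoes/?color=red&sessionid=abc123",
]
print({normalize_url(u) for u in variants})
```

If several crawled URLs normalize to the same value, that group is a candidate for a single canonical target.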

For titles, think in terms of pixel width rather than character count. For Google, ~580 px to 600 px typically displays well in SERP snippets.

6. Slow Site Speed and Render-Blocking Resources

Performance issues go beyond Core Web Vitals. They often come down to how your site is built and delivered. And if it gets too bad, visitors will bounce.

Common culprits include:

- Render-blocking JavaScript and CSS

- Uncompressed assets

- Slow server response times

- Inefficient hosting setups

- Too many requests loading at once

These issues slow down how quickly and smoothly users can interact with your site, which directly affects both UX and rankings. They may also impact how efficiently AI models are able to access and process content from your site.

Minify your code to shrink file sizes, lazy-load non-critical assets so they don't block rendering, and implement caching at multiple levels. Also, over the last few years, performance thresholds have kept getting stricter, so you need to be constantly vigilant.

7. Sitemap Errors and Orphan Pages

Sitemaps are meant to guide search engines. When they’re inaccurate, they do the opposite.

Common issues include:

- Sitemaps listing broken or noindex URLs

- Missing or outdated sitemaps

- Bloated sitemap versions that overwhelm bots

- Important pages being excluded from sitemaps

- Conflicts with robots.txt

- Orphan pages (that exist but aren’t linked from anywhere internally, making them undiscoverable)

The lack of discoverability can prevent both traditional search engines and AI-driven systems from finding and including the most important pages of your website in their results and responses.

The best ways to deal with these problems are to keep your sitemap clean and aligned with your actual site structure, and make sure every important page is internally linked.
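One way to check that alignment is to compare the URLs in your sitemap with the URLs your crawler actually reached through internal links. The sketch below assumes a standard sitemap using the sitemaps.org namespace. The sample XML and the `internally_linked` set are hypothetical stand-ins for your own data.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Return the set of <loc> values from a sitemap document."""
    root = ET.fromstring(xml_text.strip())
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def compare_sitemap_to_links(in_sitemap, internally_linked):
    """Split mismatches into orphan candidates and sitemap gaps."""
    return {
        "orphan_candidates": sorted(in_sitemap - internally_linked),
        "missing_from_sitemap": sorted(internally_linked - in_sitemap),
    }


# Hypothetical sitemap and crawl results
sample_sitemap = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/old-landing-page</loc></url>
</urlset>
"""
internally_linked = {
    "https://example.com/",
    "https://example.com/pricing",
    "https://example.com/blog",
}
report = compare_sitemap_to_links(sitemap_urls(sample_sitemap), internally_linked)
print(report)
```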

8. Poor Site Architecture and Internal Linking

Poor site architecture and URL structure make life hard for crawler bots and real users both.

You need to address problems like:

- Messy URLs that confuse everyone

- Deep hierarchies where pages sit more than, say, 3 clicks away from the homepage

- Illogical site structures that break natural flow

- Poor anchor text distribution that leaves some sections starved for link equity

These issues can typically be fixed with a flatter site structure, logical and sensible internal linking, clean and consistent URLs, and content siloing. This will also make it easier for AI systems to understand relationships between your pages and content. Most importantly, you need strong internal linking to distribute authority and help search engines better understand your site.
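Click depth is straightforward to measure once you have your internal link graph. This sketch runs a breadth-first search from the homepage. The `links` dict is a hypothetical crawl result, and the 3-click limit simply mirrors the rule of thumb above.

```python
from collections import deque


def click_depths(links, home="/"):
    """Breadth-first search giving the fewest clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to
links = {
    "/": ["/blog", "/shop"],
    "/blog": ["/blog/post"],
    "/blog/post": ["/blog/post/deep"],
    "/blog/post/deep": ["/blog/post/deep/deeper"],
    "/orphan": [],
}

depths = click_depths(links)
too_deep = sorted(page for page, depth in depths.items() if depth > 3)
unreachable = sorted(page for page in links if page not in depths)
print(too_deep, unreachable)
```

Pages in `too_deep` are candidates for a link from a higher-level hub page, and anything in `unreachable` has no internal path from the homepage at all.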

9. Hreflang and Canonical Conflicts

If your site targets multiple regions or languages, hreflang errors can cause serious confusion. Incorrect implementation can result in the wrong version of a page showing up for users in different locations. Such errors can also make it harder for AI-driven results to figure out which version of your content would be best to reference, especially when multiple variants send conflicting signals.

This usually happens when hreflang tags and canonical tags contradict each other or are incomplete.

To mitigate these risks, make sure every page has a self-referencing canonical and that hreflang tags are reciprocal (each language version links back to the others) and consistent.
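Missing return links are among the easier hreflang mistakes to catch programmatically. The sketch below assumes you've already extracted each page's hreflang annotations into a dict. The `hreflang_map` structure and URLs are hypothetical.

```python
def missing_return_links(hreflang_map):
    """Find alternates that do not link back to the page that references them.

    hreflang_map is an assumed {page_url: {language_code: alternate_url}} dict.
    """
    problems = []
    for page, alternates in hreflang_map.items():
        for alternate_url in alternates.values():
            if alternate_url == page:
                continue  # a self-reference needs no return link
            return_links = hreflang_map.get(alternate_url, {})
            if page not in return_links.values():
                problems.append((page, alternate_url))
    return problems


# Hypothetical data: the German page forgets to reference the English one
hreflang_map = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    "https://example.com/de/": {
        "de": "https://example.com/de/",
    },
}
print(missing_return_links(hreflang_map))
```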

10. JavaScript Rendering and AI Crawler Barriers

Modern websites rely heavily on JavaScript, but that comes with trade-offs.

If key content isn’t available in the initial HTML, search engines may struggle to see it. That can lead to incomplete indexing or “thin” versions of pages appearing in search.

Common issues include:

- Content that only loads after user interaction

- Lazy-loaded elements that never get crawled

- Delays caused by client-side rendering

There’s also a newer layer to consider. Some sites accidentally block AI crawlers like GPTBot or PerplexityBot, which can limit visibility in AI-generated answers. In other words, you now need to optimize for answer engines as well as search engines.

The safest approach is to switch to server-side rendering for critical content and review your robots.txt settings carefully to allow legit bots through.
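You can test robots.txt rules against specific crawlers without waiting for them to visit. The sketch below uses Python's built-in `urllib.robotparser`. The robots.txt content and URL are hypothetical, and the user-agent list is only an example of bots you might care about.

```python
from urllib.robotparser import RobotFileParser


def blocked_agents(robots_txt, url, agents):
    """Return the user agents that robots_txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot sitewide
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

agents = ["Googlebot", "GPTBot", "PerplexityBot"]
print(blocked_agents(robots_txt, "https://example.com/blog/seo-guide", agents))
```

Whether to allow a given AI crawler is a business decision. The point of the check is to make sure any block is deliberate rather than accidental.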

11. Missing or Incorrect Structured Data (Schema)

Invalid markup, wrong schema types, or mismatched data waste your shot at enhanced snippets. No schema implementation means you miss out on rich results entirely.

Schema plays an even bigger role in 2026 by increasing eligibility for rich results and helping AI-driven search systems interpret your content more accurately.

To set things right, avoid spammy or excessive markup and validate whatever you decide to keep. Also, use the right schema type and make sure it is consistent with the visible text on the page.
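For illustration, here's a small sketch that builds Article markup as JSON-LD and checks that the headline actually appears in the page's visible text. The function names, author, date, and URL are placeholders, and the properties shown are just a common subset of schema.org's Article type. You'd still want to validate the final output with a dedicated testing tool.

```python
import json


def article_jsonld(headline, author_name, date_published, url):
    """Build a JSON-LD script tag for a schema.org Article."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    # Escape "</" so a value can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + payload + "</script>"


def headline_matches_page(headline, visible_text):
    """Markup should describe what users actually see on the page."""
    return headline.strip().lower() in visible_text.lower()


# Placeholder values for illustration
snippet = article_jsonld(
    "13 Technical SEO Issues",
    "Jane Doe",
    "2026-01-15",
    "https://example.com/technical-seo-issues",
)
print(snippet)
print(headline_matches_page("13 Technical SEO Issues", "13 Technical SEO Issues That Still Trip Up Websites"))
```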

12. Accessibility and Mobile Usability Gaps

Mobile and accessibility gaps get overlooked in audits, but since Google relies on mobile-first indexing, you should worry about them.

Issues like tiny font sizes, poorly set tap targets, WCAG compliance failures, lack of responsiveness, and intrusive interstitials all destroy user-friendliness and rankings.

Some solutions you could try are:

- Add proper viewport meta tags to control scaling

- Implement ARIA labels for better screen reader support

- Ensure content consistency for desktop vs. mobile versions
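The viewport check in particular is easy to automate. Here's a minimal sketch that looks for a viewport meta tag containing `width=device-width`. The class and function names and the sample markup are hypothetical, and a passing result doesn't guarantee the layout is actually responsive.

```python
from html.parser import HTMLParser


class ViewportFinder(HTMLParser):
    """Records the content of the viewport meta tag, if one exists."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.content = attributes.get("content") or ""


def has_responsive_viewport(html):
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return False
    # Ignore spacing and case differences in the directive list.
    return "width=device-width" in finder.content.replace(" ", "").lower()


# Hypothetical page head
good_head = '<head><meta name="viewport" content="width=device-width, initial-scale=1"></head>'
print(has_responsive_viewport(good_head))
```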

13. Security Issues

Not securing your site can harm users, trustworthiness, and, to a smaller extent, rankings.

Common issues include:

- Missing HTTPS

- Mixed content warnings in browsers

- Missing or misconfigured SSL certificates

- Lack of basic security headers

To mitigate these risks, enforce HTTPS sitewide using 301 redirects, hunt down and fix all mixed content, implement HTTP Strict Transport Security (HSTS) to lock in secure connections, and add basic security headers like Content Security Policy (CSP) or X-Frame-Options for extra protection.
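A simple header check can tell you which of these protections a response is missing. The sketch below works on a plain dict of response headers, so it stays independent of any HTTP library. The function name and sample headers are hypothetical, and the list covers only the headers named above.

```python
RECOMMENDED_HEADERS = [
    "Strict-Transport-Security",
    "Content-Security-Policy",
    "X-Frame-Options",
]


def missing_security_headers(response_headers):
    """Return the recommended headers absent from a response.

    Header names are compared case-insensitively, as HTTP requires.
    """
    present = {name.lower() for name in response_headers}
    return [
        header
        for header in RECOMMENDED_HEADERS
        if header.lower() not in present
    ]


# Hypothetical response headers captured from a page
sample_headers = {
    "content-type": "text/html; charset=utf-8",
    "strict-transport-security": "max-age=31536000; includeSubDomains",
}
print(missing_security_headers(sample_headers))
```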

Wrapping Up

Technical SEO issues tend to build up quietly. Individually, they might seem small and not so serious. But together, they can significantly affect how your site performs in search. That impact extends even beyond traditional rankings to how your content appears in AI-generated responses.

Before you start chasing content or backlinks, you need to get the foundation right. This also includes being regular and consistent, because technical SEO isn’t a one-time fix. Regular checks are part of the process, especially as your site grows and evolves. It takes effort to match the pace of updates like enhanced E-E-A-T requirements and tougher Core Web Vitals standards.

Manual reviews can catch some of these issues, but they’re neither sustainable nor scalable. Adopting a more systematic approach will help you catch problems earlier and fix them before they start affecting performance. That's why you need some reliable tools, possibly with sufficient automation, that make the process practical and thorough.