Intro

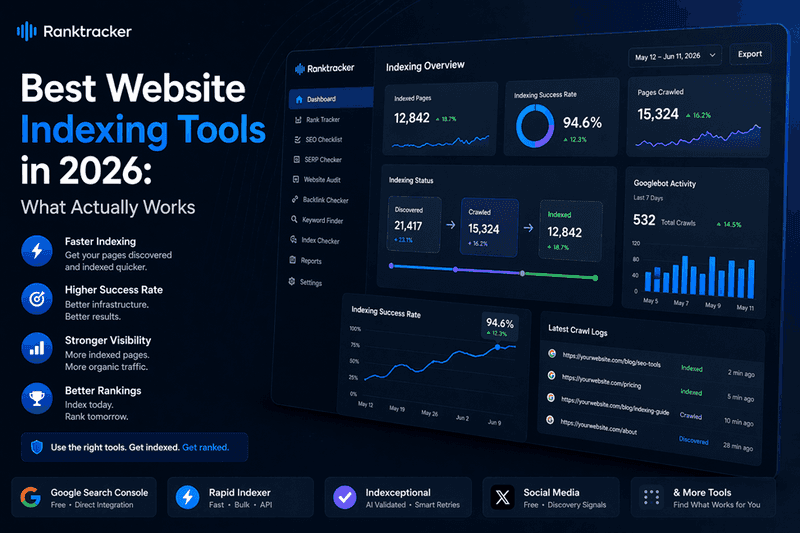

Publishing a page does not mean Google has found it.

The gap between a URL going live and Google adding it to the index is one of the most underestimated problems in SEO. For content-heavy sites, link builders, and agencies managing multiple campaigns, that gap directly translates to delayed rankings, wasted link equity, and slower returns on content investment.

Website indexing tools exist to close that gap - by creating crawl signals, building discovery pathways, and in some cases repeatedly resubmitting URLs until confirmed indexed.

This guide covers the tools worth using in 2026, based on testing across live campaigns, with notes on where each one fits into a practical workflow.

Why Indexing Delays Happen

Google allocates a crawl budget to every domain - a finite amount of resources Googlebot uses to discover and process pages. As the web grows, that budget gets spread thinner.

The practical result is two common Google Search Console (GSC) statuses that most SEOs know well:

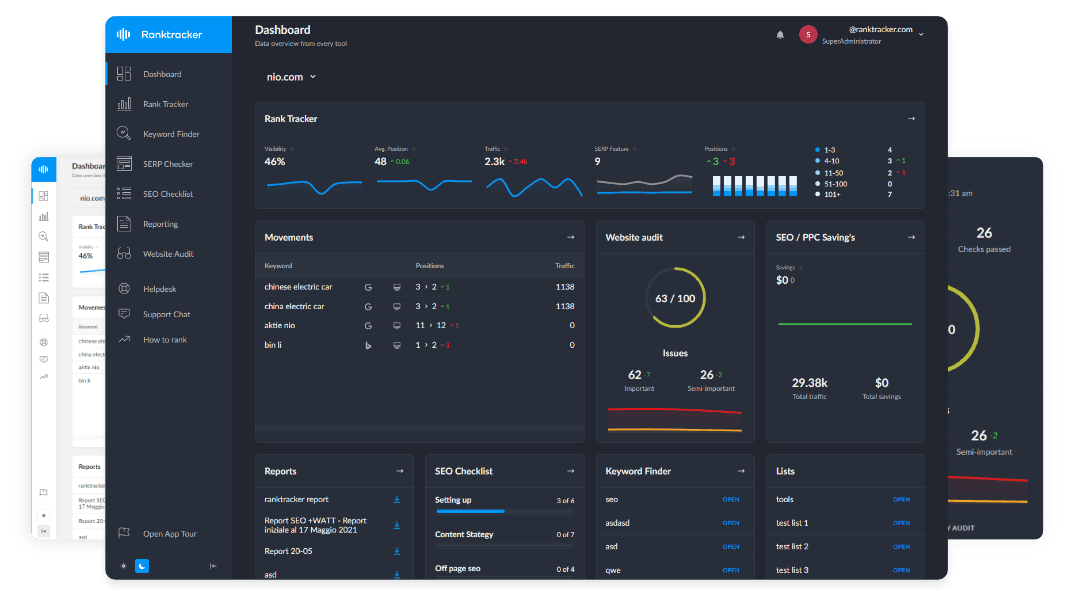

"Discovered - Currently Not Indexed" means Google found the URL (usually via sitemap) but has not yet sent Googlebot to crawl it. This is a queue problem - your page is waiting its turn.

"Crawled - Currently Not Indexed" means Googlebot visited the page but decided not to include it in the index. This is a quality or relevance problem - the crawl happened, but the content did not pass Google's threshold.

Indexing tools solve the first problem reliably. The second requires improving the underlying page. That distinction matters when choosing where to spend budget.
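As a rough illustration of that distinction, the sketch below maps a status label to the next step. The `Action` names and the `triage` function are hypothetical helpers invented for this example, and the matching assumes labels worded like the two statuses quoted above.

```python
from enum import Enum


class Action(Enum):
    SUBMIT_TO_INDEXER = "submit to an indexing tool"
    IMPROVE_PAGE = "improve the page before resubmitting"
    NO_ACTION = "no action needed"
    INVESTIGATE = "investigate manually"


def triage(coverage_state: str) -> Action:
    """Map a GSC status label to the next step (illustrative only)."""
    # Normalise case and whitespace so exported labels still match.
    label = " ".join(coverage_state.lower().split())
    if label.startswith("discovered") and "not indexed" in label:
        return Action.SUBMIT_TO_INDEXER  # queue problem: a crawl signal helps
    if label.startswith("crawled") and "not indexed" in label:
        return Action.IMPROVE_PAGE       # quality problem: fix the page first
    if "indexed" in label and "not indexed" not in label:
        return Action.NO_ACTION
    return Action.INVESTIGATE
```

Running a GSC export through a filter like this before buying credits keeps paid submissions pointed at the URLs they can actually help.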

The Tools

1. Google Search Console

The free option built by Google itself - and the most white-hat approach available for pages you own and control directly. The URL Inspection tool lets you manually request crawling for any URL on a verified property. For fresh content, updated pages, or anything flagged in the Page indexing report (formerly the Coverage report), a manual request is the cleanest zero-cost option available.

There are three significant limitations worth knowing before relying on it. First, it is heavily rate limited - you can only submit a small number of URLs manually per day, making it impractical for bulk indexing work. Second, it only works for domains you have verified ownership of - for third-party backlink pages, guest posts, or any URL on another domain, it is not an option. Third, even when a request is submitted successfully, indexing can still take days rather than the minutes achieved by paid tools like Rapid Indexer. It is the cleanest method available, but not the fastest.

Best for: Owned pages, fresh content, GSC error resolution

Cost: Free

2. Rapid Indexer

The strongest paid option currently available for speed and infrastructure depth - and one of two tools on this list offering AI-validated submissions.

Rapid Indexer operates two submission tiers. The Standard Queue uses a drip-fed authority network to process bulk submissions within 24-48 hours - suited to citations, Tier 2/3 links, and large batches where gradual discovery looks natural. The VIP Queue is built for high-priority pages and achieved crawl discovery in under two minutes in our testing - the fastest result we recorded across all tools tested.

The underlying mechanism combines cloud API signalling with high-authority feed injection, triggering real Googlebot visits that can be independently verified via crawl log monitoring tools. Before processing, URLs are validated for HTTP response status and crawl-readiness - so credits are not wasted on 404s or broken redirects. URLs that do not index on the first pass are automatically resubmitted using adaptive retry logic.
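The validate-then-retry pattern described here can be sketched in a few lines. This is not Rapid Indexer's implementation: `fetch_status`, `submit`, and `is_indexed` are hypothetical callables you would supply yourself, and the doubling delay is only one assumed reading of "adaptive retry".

```python
import time
from typing import Callable, Iterable


def is_crawl_ready(status_code: int) -> bool:
    """Treat only a direct 200 response as worth spending a credit on."""
    return status_code == 200


def submit_with_retries(
    urls: Iterable[str],
    fetch_status: Callable[[str], int],  # hypothetical: returns the HTTP status
    submit: Callable[[str], None],       # hypothetical: sends a URL to an indexer
    is_indexed: Callable[[str], bool],   # hypothetical: index check
    max_attempts: int = 3,
    base_delay_s: float = 3600.0,
    sleep: Callable[[float], None] = time.sleep,
) -> dict[str, str]:
    """Return a per-URL outcome: 'skipped', 'indexed', or 'failed'."""
    outcomes: dict[str, str] = {}
    for url in urls:
        if not is_crawl_ready(fetch_status(url)):
            outcomes[url] = "skipped"  # 404s and redirects are never submitted
            continue
        outcomes[url] = "failed"
        for attempt in range(max_attempts):
            submit(url)
            sleep(base_delay_s * (2 ** attempt))  # wait longer after each pass
            if is_indexed(url):
                outcomes[url] = "indexed"
                break
    return outcomes
```

Passing the three callables in keeps the loop independent of any one vendor, so the same logic can wrap whichever indexer and index checker a campaign uses.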

It also includes an AI traffic module that generates engagement signals (CTR, dwell time, scroll depth) via residential IPs - relevant for pages where user behaviour signals matter alongside pure indexation.

For agencies, it offers bulk processing up to 10,000 URLs, API access, Zapier/Make/n8n integration, a WordPress plugin, white-label CSV reporting, and auto-refunds on indexing failures.

Best for: Fast Tier 1 indexing, agency workflows, bulk campaigns

Pricing: $0.001/URL (checking), $0.02/URL (Standard), $0.10/URL (VIP)

3. Indexceptional

Founded by James Dooley and Leo Soulas in mid-2024, Indexceptional covers the same core feature set as Rapid Indexer - AI-powered URL validation, adaptive retry logic on failed submissions, and private crawl networks with intelligent ping scheduling for consistent long-term indexation.

The key difference is the credit refund policy. Any URL that does not get indexed has its credit returned automatically - meaning you pay only for confirmed results. That guarantee adds a layer of financial protection that makes it particularly well suited to larger batches where some indexing failures are expected.

The tradeoff is price - at the rates listed in this guide, Indexceptional works out to roughly $0.25-$0.48 per credit against $0.02-$0.10 per URL for Rapid Indexer, and it does not include a traffic simulation module. For campaigns where the refund guarantee outweighs the cost premium, it is a strong option alongside or instead of Rapid Indexer.

It is rated 4.8 stars across 300+ verified reviews and won a community SEO utility award in 2025.

Best for: AI-validated indexing with refund protection, agencies wanting guaranteed spend efficiency

Pricing: $29 (60 credits) to $499 (2,000 credits). 1 credit = 1 URL. Invalid and redirect URLs are not charged. Credits refunded for unindexed links.

4. Social Media Sharing

A free method worth knowing about - with important caveats on reliability and scale.

When a URL is shared publicly on Twitter/X, Reddit, LinkedIn, or similar high-crawl platforms, it creates a crawlable page that Googlebot may follow. On platforms with high crawl frequency like Reddit and Twitter/X, this can sometimes trigger discovery within hours of posting.

The key practical detail: share the backlink page URL itself, not just your money site. If a guest post or niche edit needs to be indexed, sharing that specific URL creates a discovery pathway pointing directly at the page that needs to be found.

The limitations are real though. Crawling from social shares is not guaranteed - Googlebot may or may not follow the link depending on platform crawl patterns at that moment. It also does not scale practically for large link batches, and the signal strength is inconsistent compared to paid tools.

Where it genuinely adds value is the downstream effect. Social shares drive real traffic to pages, and real traffic creates genuine engagement signals - time on page, return visits, click patterns - that can support rankings beyond the initial indexation event. For important pages, combining a paid indexer with social amplification gives both reliable discovery and ongoing ranking signals.

Best for: Supplementary discovery signals, driving genuine traffic to important pages, free crawl pathway creation

Cost: Free

Note: Not reliable as a standalone indexing method at scale

5. Backlink Indexing Tool

In operation since 2021, with one policy that stands out from the rest of the market: automatic credit refunds for any URL that does not get indexed. You pay only for confirmed results.

It runs parallel signals across Googlebot, Bingbot, and Yandex - broader search engine coverage than tools focused solely on Google. Average success rate in our testing sits at 89%, with most links indexed within 1-5 days and initial Googlebot crawl typically occurring within 1-6 hours of submission.

The refund policy removes most of the financial risk from large bulk campaigns.

Best for: Bulk backlink campaigns, risk-free spend on large batches

Pricing: $30 (100 credits), $135 (500 credits), $510 (2,000 credits)

6. Indx.it

A fast-turnaround indexing tool reporting discovery times of 1-5 minutes - comparable to Rapid Indexer's VIP Queue in speed terms.

It operates on a pay-as-you-go credit model with no subscription required. Pricing sits slightly above Rapid Indexer per URL. The dashboard is straightforward for both single submissions and recurring use.

A useful secondary option for Tier 1 links where speed is the priority and spreading submissions across more than one infrastructure is preferred.

Best for: Fast-turnaround Tier 1 submissions, infrastructure diversification

Pricing: Pay-as-you-go, credits do not expire

7. Giga Indexer

The most flexible option on this list for controlling submission pacing - drip-feed windows run from instant through to 30 days.

For large link batches where a gradual, natural-looking discovery pattern matters, this level of pacing control is genuinely useful. Success rate sits at 80% within 72 hours in our testing, with a 9-day refund guarantee on unindexed links.
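To make the pacing idea concrete, here is a minimal sketch of an evenly spaced drip-feed schedule. It is an assumption about how such pacing could work, not a description of Giga Indexer's internals, and `drip_schedule` is a hypothetical helper.

```python
from datetime import datetime, timedelta


def drip_schedule(
    urls: list[str], start: datetime, window_days: float
) -> list[tuple[datetime, str]]:
    """Spread submissions evenly across the window; 0 days means all at once."""
    if not urls:
        return []
    if window_days <= 0 or len(urls) == 1:
        return [(start, url) for url in urls]
    # The first URL goes out at the start, the last at the end of the window.
    step = timedelta(days=window_days) / (len(urls) - 1)
    return [(start + i * step, url) for i, url in enumerate(urls)]
```

With a 30-day window and 300 URLs, this spacing works out to roughly ten submissions a day.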

Best for: Large batches requiring gradual submission, Tier 2/3 links

Pricing: $29 (60 credits), $99 (260 credits), $499 (2,000 credits)
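Credit packs are easier to compare once they are reduced to a per-credit figure. The short sketch below does that arithmetic for the packs listed in this guide. The prices are copied from the Pricing lines above, and `cost_per_credit` is just an illustrative helper.

```python
# Credit packs as listed in this guide: (price in USD, credits).
PACKS = {
    "Indexceptional": [(29, 60), (499, 2000)],
    "Backlink Indexing Tool": [(30, 100), (135, 500), (510, 2000)],
    "Giga Indexer": [(29, 60), (99, 260), (499, 2000)],
}


def cost_per_credit(price_usd: float, credits: int) -> float:
    """Price of one credit (one URL) in a given pack."""
    return price_usd / credits


for tool, packs in PACKS.items():
    for price, credits in packs:
        per_credit = cost_per_credit(price, credits)
        print(f"{tool}: ${price} for {credits} credits = ${per_credit:.3f} each")
```

On these numbers the smallest packs come to about $0.30 to $0.48 per credit, and the 2,000-credit packs to about $0.25.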

What Indexing Tools Cannot Do

Worth stating clearly before spending money on any of the above.

An indexing tool gets Googlebot to visit a URL. It cannot control what Google decides once it arrives. If the page has thin content, duplicated text, or adds nothing new to the index, Google will crawl it and leave without indexing. The tool worked. The page did not pass.

A few factors that directly influence whether a crawled page gets indexed and retained:

Content quality and depth - Pages that cover a topic thoroughly, answer a genuine query, and bring something new to the index perform better than thin or closely paraphrased content, regardless of how many crawl signals are sent.

Page structure - A clear heading hierarchy (H1, H2, H3), logical flow, and proper internal linking help Googlebot understand and categorise a page. Well-structured pages are easier to retain in the index than poorly organised ones.

Technical health - Slow load times, blocked resources, broken elements, and conflicting canonical tags all give Googlebot reasons to deprioritise a page. Passing Core Web Vitals and keeping pages technically clean removes friction from the process.

Link signals - Pages with no internal links and no external discovery signals look low-priority. Building some link equity to a page before or alongside indexing submission improves the chance that a crawled page stays indexed rather than falling back out.

Indexing tools are most effective when the underlying page quality already meets Google's threshold. Getting Googlebot there faster does not change what it decides when it arrives.
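Some of these checks can be run before spending a credit. The sketch below covers two of them with Python's standard `html.parser`: a canonical tag that points at a different URL, and a `noindex` robots meta tag. The `noindex` check is an addition not covered above, and both function and class names are hypothetical. It says nothing about content quality, which still needs a human read.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collect the robots meta directive and canonical URL from a page."""

    def __init__(self) -> None:
        super().__init__()
        self.robots = ""
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() == "robots":
            self.robots = attr.get("content", "").lower()
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href")


def indexability_issues(html: str, page_url: str) -> list[str]:
    """Return human-readable problems found in the page markup."""
    parser = IndexabilityParser()
    parser.feed(html)
    issues = []
    if "noindex" in parser.robots:
        issues.append("meta robots contains noindex")
    # Ignore a trailing-slash difference; anything else counts as a conflict.
    if parser.canonical and parser.canonical.rstrip("/") != page_url.rstrip("/"):
        issues.append(f"canonical points elsewhere: {parser.canonical}")
    return issues
```

An empty list means neither problem was found in the markup, not that the page will be indexed.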

Recommended Workflow

| Scenario | Recommended Approach |
| --- | --- |
| Your own pages | Google Search Console first - free and white-hat |
| Time-sensitive Tier 1 content | Rapid Indexer VIP Queue or Indx.it |
| AI-validated precision indexing | Indexceptional (credit refund on failures) |
| Third-party backlinks, bulk | Backlink Indexing Tool (auto-refund covers risk) |
| Large batches, gradual pacing | Giga Indexer drip-feed |
| Ranking signals + traffic boost | Social media sharing alongside paid indexing |

The most reliable approach combines free methods for owned content with a paid indexer for third-party pages - rather than defaulting to paid tools across the board. Social sharing works best as a complement to paid indexing on important pages, not as a replacement for it. For competitive campaigns, running a primary indexer alongside a verification step gives a more accurate picture of what has actually been indexed versus what is still queued.
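For teams that want to automate the routing, the workflow table can be written as a small decision function. This is only a sketch: the order of the checks, the `BULK_THRESHOLD` cut-off, and the fall-through to Indexceptional are assumptions made for illustration, not rules from the testing.

```python
BULK_THRESHOLD = 100  # hypothetical cut-off for "bulk"; tune per campaign


def recommend(
    owned: bool,
    time_sensitive: bool = False,
    gradual: bool = False,
    batch_size: int = 1,
) -> str:
    """Return the workflow table's recommendation for one scenario."""
    if owned:
        return "Google Search Console"  # free and white-hat comes first
    if time_sensitive:
        return "Rapid Indexer VIP Queue or Indx.it"
    if gradual:
        return "Giga Indexer drip-feed"
    if batch_size >= BULK_THRESHOLD:
        return "Backlink Indexing Tool"
    return "Indexceptional"
```

For example, `recommend(owned=False, batch_size=500)` returns the bulk option, while any owned page is routed to Google Search Console regardless of the other flags.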