Intro

Every year, AI models leap forward: GPT-4 to GPT-5, Gemini 1.5 to Gemini 2.0, Claude 3 to Claude 3.5, Llama 2 to Llama 3. Each version promises to be “smarter,” “more capable,” “more aligned,” or “more accurate.”

But what does “smarter” actually mean?

Marketers, SEOs, and content strategists hear claims about:

- bigger context windows
- better reasoning
- improved safety
- stronger multimodality
- higher benchmark scores
- more reliable citations

Yet these surface-level improvements don’t explain the real mechanics of intelligence in large language models — the factors that determine whether your brand gets cited, how your content is interpreted, and why certain models outperform others in real-world use.

This guide breaks down the true drivers of LLM intelligence, from architecture and embeddings to retrieval systems, training data, and alignment — and explains what this means for modern SEO, AIO, and content discovery.

The Short Answer

One LLM becomes “smarter” than another when it:

- Represents meaning more accurately
- Reasons more effectively across steps
- Understands context more deeply
- Uses retrieval more intelligently
- Grounds information with fewer hallucinations
- Makes better decisions about which sources to trust
- Learns from higher-quality data
- Aligns with user intent more precisely

In other words:

Smarter models don’t just “predict better.” They understand the world more accurately.

Let’s break down the components that create this intelligence.

1. Scale: More Parameters, But Only If Used Correctly

For several years, “bigger = smarter” was the rule. More parameters → more knowledge → more capabilities.

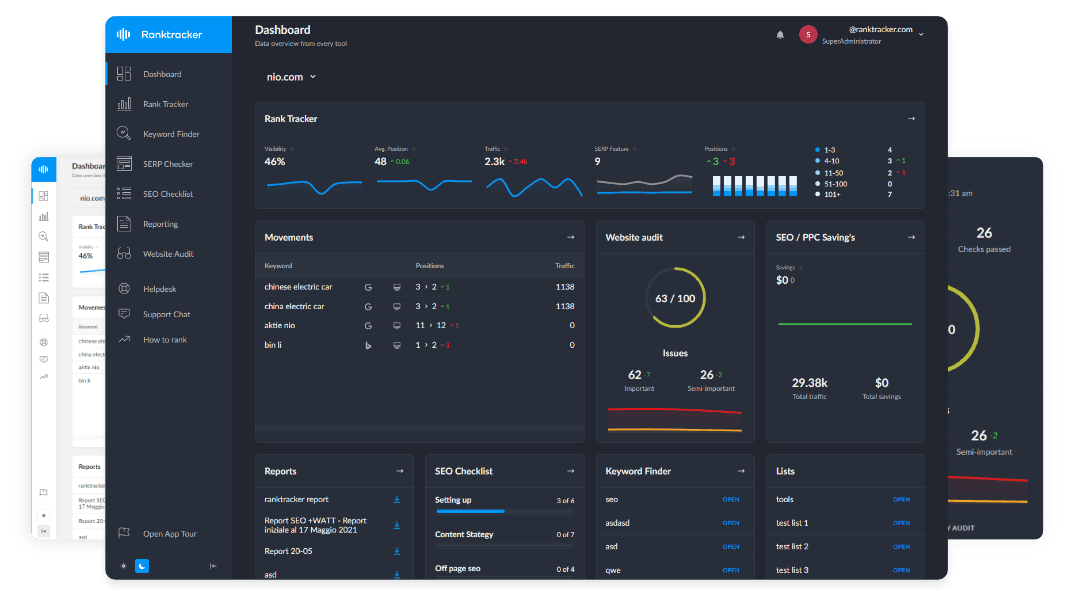

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

But in 2025, it’s more nuanced.

Why scale still matters:

- more parameters = more representational capacity
- richer embeddings
- deeper semantic understanding
- better handling of edge cases
- more robust generalization

Frontier models such as GPT-5, Gemini 2.0, and Claude 3.5 still rely on massive scale, even though their developers do not publish parameter counts.

But raw scale alone is no longer the measure of intelligence.

Why?

Because an ultra-large model with weak data or poor training can be worse than a smaller but better-trained model.

Scale is the amplifier — not the intelligence itself.
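
To make "more parameters" concrete, here is a rough back-of-the-envelope sketch. It assumes a dense decoder-only transformer and uses the common approximation of about 12 × layers × width² weights, ignoring embeddings. The function name is made up for illustration; the 96-layer, 12288-wide configuration is the one published for GPT-3.

```python
def approx_transformer_params(n_layers: int, d_model: int) -> int:
    """Rough weight count for a dense decoder-only transformer.

    Per layer: about 4*d^2 for the attention projections plus about 8*d^2
    for a feed-forward block with a 4x hidden size. Embeddings, biases,
    and normalization weights are ignored (an assumption of this sketch).
    """
    return 12 * n_layers * d_model ** 2


# GPT-3's published shape: 96 layers, model width 12288.
gpt3_estimate = approx_transformer_params(n_layers=96, d_model=12288)
print(f"{gpt3_estimate / 1e9:.0f}B parameters")  # prints "174B parameters"
```

The estimate lands close to the published 175B figure, which shows how quickly parameter counts grow with width. It says nothing about how well those parameters were trained.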

2. Quality and Breadth of Training Data

Training data is the foundation of LLM cognition.

Models trained on:

- high-quality curated datasets
- well-structured documents
- factual sources
- domain authority content
- well-written prose
- code, math, scientific papers

…develop sharper embeddings and better reasoning.

Lower-quality data leads to:

- hallucinations
- bias
- instability
- weak entity recognition
- factual confusion

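This helps explain the different data strategies vendors describe (the details are largely unpublished):

- Gemini is built by Google, which can draw on its own search and knowledge-graph data
- GPT uses a mixture of licensed, public, and synthetic data
- Claude is shaped by Constitutional AI, a set of written principles applied during training rather than a data-curation method
- Many open-weight models depend heavily on filtered web crawls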

Better data → better understanding → better citations → better output.

This also means:

Your website can become training data. Your clarity influences the next generation of models.

3. Embedding Quality: The Model’s “Understanding Space”

Smarter models have better embeddings — the mathematical representations of concepts and entities.

Stronger embeddings allow models to:

- distinguish between similar concepts
- resolve ambiguity
- maintain consistent definitions
- accurately map your brand
- identify topical authority
- retrieve relevant knowledge during generation

Embedding quality determines:

- whether Ranktracker is recognized as your brand
- whether “SERP Checker” is linked to your tool
- whether “keyword difficulty” is associated with your content
- whether LLMs cite you or your competitor

LLMs with a superior embedding space are, in practice, more intelligent.
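
The idea that related concepts sit "close together" in embedding space can be sketched with cosine similarity. This is a toy illustration only: the three-dimensional vectors below are hand-picked assumptions, not output from any real model, and the `nearest` helper is hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings, invented for illustration.
embeddings = {
    "rank tracker": [0.9, 0.1, 0.0],
    "serp checker": [0.8, 0.3, 0.1],
    "banana bread": [0.0, 0.2, 0.9],
}


def nearest(term):
    """Return the (name, similarity) of the closest other term."""
    query = embeddings[term]
    others = [
        (name, cosine_similarity(query, vec))
        for name, vec in embeddings.items()
        if name != term
    ]
    return max(others, key=lambda pair: pair[1])


print(nearest("rank tracker")[0])  # prints "serp checker"
```

Real models use hundreds or thousands of dimensions, but the principle is the same: if your brand and your product terms end up near each other, the model is more likely to connect them.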

4. Transformer Architecture Improvements

New LLM generations often introduce architectural upgrades such as:

- deeper attention layers
- mixture-of-experts (MoE) routing
- better long-context handling
- improved parallelism
- sparsity for efficiency
- enhanced positional encoding

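For example (vendors publish few architectural details, so treat these as reported characteristics rather than confirmed designs):

- GPT-5 is described by OpenAI as routing requests between a faster model and a deeper-reasoning model.
- Gemini models are known for very long context windows.
- Claude's stability is usually attributed to Constitutional AI, which is a training method rather than an architectural layer.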

These upgrades allow models to:

- track narratives across very long documents
- reason through multi-step chains
- combine modalities (text, vision, audio)
- stay consistent across long outputs
- reduce logical drift

Architecture = cognitive capability.
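
Mixture-of-experts routing, mentioned above, can be sketched in a few lines. This is a simplified illustration of top-k gating with toy "experts" that are plain functions; it is not the routing used by any specific model, and all names here are assumptions.

```python
import math


def softmax(scores):
    """Turn raw gate scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    total = sum(probs[i] for i in top)
    return [(i, probs[i] / total) for i in top]


def moe_forward(x, experts, gate_scores, k=2):
    """Weighted sum of the selected experts' outputs; the rest are skipped."""
    return sum(weight * experts[i](x) for i, weight in route_top_k(gate_scores, k))


# Toy experts standing in for feed-forward sub-networks.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: -x]
print(moe_forward(3, experts, gate_scores=[2.0, 2.0, -5.0]))  # prints 5.0
```

Only two of the three experts run for this input, which is the efficiency argument for sparsity: total capacity grows while the work per token stays roughly constant.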

5. Reasoning Systems and Chain-of-Thought Quality

Reasoning (not writing) is the real intelligence test.

Smarter models can:

- break down complex problems
- follow multi-step logic
- plan and execute actions
- analyze contradictions
- form hypotheses
- explain thought processes
- evaluate competing evidence

This is why GPT-5, Claude 3.5, and Gemini 2.0 score higher than earlier models on benchmarks and tasks involving:

- math
- coding
- logic
- medical reasoning
- legal analysis
- data interpretation
- research tasks

Better reasoning = higher real-world intelligence.

6. Retrieval: How Models Access Information They Don’t Know

The smartest models don’t rely on parameters alone.

They integrate retrieval systems:

- search engines
- internal knowledge bases
- real-time documents
- vector databases
- tools and APIs

Retrieval makes an LLM “augmented.”

Examples:

- Gemini: deeply embedded in Google Search
- ChatGPT Search: live, curated answer engine
- Perplexity: hybrid retrieval + multi-source synthesis
- Claude: document-grounded contextual retrieval

Models that retrieve accurately are perceived as “smarter” because they:

- hallucinate less
- cite better sources
- use fresh information
- understand user-specific context

Retrieval is one of the biggest differentiators in 2025.
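
The retrieve-then-answer pattern can be sketched with a toy word-overlap retriever. This is a minimal sketch under stated assumptions: production systems use learned embeddings and a vector database rather than word counts, and the documents, function names, and prompt wording below are all invented for illustration.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def score(query, doc):
    """Cosine similarity between word-count vectors of query and document."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    dot = sum(q[t] * d[t] for t in q)
    norm_q = math.sqrt(sum(v * v for v in q.values()))
    norm_d = math.sqrt(sum(v * v for v in d.values()))
    return dot / (norm_q * norm_d) if norm_q and norm_d else 0.0


def retrieve(query, docs, k=1):
    """Return the k documents most similar to the query."""
    return sorted(docs, key=lambda doc: score(query, doc), reverse=True)[:k]


def build_prompt(query, docs, k=1):
    """Ground the model by placing retrieved text ahead of the question."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


docs = [
    "keyword difficulty estimates how hard it is to rank for a term",
    "a backlink is a link from another site to yours",
    "context windows limit how much text a model can read",
]
print(build_prompt("what is keyword difficulty", docs))
```

The takeaway for content teams: the passage that gets retrieved is the one whose wording most clearly matches the question, so clear, self-contained definitions are easier to surface and cite.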

7. Fine-Tuning, RLHF, and Alignment

Smarter models are more aligned with:

- user expectations
- platform safety policies
- helpfulness objectives
- correct reasoning patterns
- industry compliance

Techniques include:

- Supervised Fine-Tuning (SFT)
- Reinforcement Learning from Human Feedback (RLHF)
- Constitutional AI (Anthropic)
- Multi-agent preference modeling
- Self-training

Good alignment makes a model:

- more reliable
- more predictable
- more honest
- better at understanding intent

Poor alignment makes a model seem “dumb” even if its intelligence is high.
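
At the heart of RLHF is a reward model trained on human comparisons: given two answers, it should score the one people preferred higher. A minimal sketch of the standard pairwise preference loss, assuming the reward scores are already computed (the function name and the example numbers are illustrative):

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Small when the preferred answer scores well above the rejected one,
    large when the reward model ranks them the wrong way round.
    """
    margin = reward_chosen - reward_rejected
    return math.log1p(math.exp(-margin))


print(preference_loss(3.0, 0.0) < preference_loss(0.0, 3.0))  # prints True
```

Minimizing this loss over many human comparisons teaches the reward model what "helpful" looks like, and that reward signal is then used to tune the language model itself.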

8. Multimodality and World Modeling

GPT-5 and Gemini 2.0 are designed as multimodal models. Depending on the model and product, inputs can include:

- text
- images
- PDFs
- audio
- video
- code
- sensor data

Multimodal intelligence = world modeling.

Models begin to understand:

- cause and effect
- physical constraints
- temporal logic
- scenes and objects
- diagrams and structure

This pushes LLMs toward agentic capability.

Smarter models understand not only language — but reality.

9. Context Window Size (But Only When Reasoning Supports It)

Bigger context windows (1M–10M tokens) allow models to:

- read entire books
- analyze websites end-to-end
- compare documents
- maintain narrative consistency
- cite sources more responsibly

But without strong internal reasoning, long context becomes noise.

Smarter models use context windows intelligently — not just as a marketing metric.

10. Error Handling and Self-Correction

The smartest models can:

- detect contradictions
- identify logical fallacies
- correct their own mistakes
- re-evaluate answers during generation
- request more information
- refine their output mid-stream

This self-reflective capability is a major leap.

It separates “good” models from truly “intelligent” ones.
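
Self-correction is often implemented as a generate, check, retry loop. Here is a hedged sketch of that pattern: `toy_generate` and `toy_check` are stand-ins invented for this example (a real system would call a model and a verifier), and nothing here reflects how any particular vendor implements it.

```python
def self_correct(generate, check, max_rounds=3):
    """Ask for an answer, verify it, and retry with feedback if it fails."""
    feedback = None
    answer = None
    for attempt in range(1, max_rounds + 1):
        answer = generate(feedback)
        ok, feedback = check(answer)
        if ok:
            return answer, attempt
    return answer, max_rounds  # best effort after exhausting retries


def toy_generate(feedback):
    # Stand-in for a model call: wrong at first, fixed once feedback arrives.
    return "2 + 2 = 4" if feedback else "2 + 2 = 5"


def toy_check(answer):
    # Stand-in verifier: recompute the sum and compare with the claim.
    left, right = answer.split("=")
    a, b = (int(term) for term in left.split("+"))
    if a + b == int(right):
        return True, None
    return False, f"{a} + {b} is not {int(right)}"


print(self_correct(toy_generate, toy_check))  # prints ('2 + 2 = 4', 2)
```

The loop only helps when the checker is more reliable than the generator, which is why verifiable domains such as math and code see the biggest gains from this approach.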

What This Means for SEOs, AIO & Generative Visibility

When LLMs become smarter, the rules of digital visibility shift dramatically.

Smarter models:

- detect contradictory information more easily
- penalize noisy or inconsistent brands
- prefer canonical, well-structured content
- cite fewer but more reliable sources
- choose entities with stronger semantic signals
- compress and abstract topics more aggressively

This means:

- ✔ Your content must be clearer
- ✔ Your facts must be more consistent
- ✔ Your entities must be stronger
- ✔ Your backlinks must be more authoritative
- ✔ Your clusters must be deeper
- ✔ Your structure must be machine-friendly

Smarter LLMs raise the bar for everyone — especially for brands relying on thin content or keyword-driven SEO.
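
"More consistent facts" is something you can audit yourself. The sketch below assumes you have already extracted key facts from each page into a simple dictionary; the page paths, field names, and values are invented for illustration and do not describe any real site.

```python
from collections import defaultdict


def find_conflicts(pages):
    """Return each fact that is stated with different values across pages."""
    seen = defaultdict(set)
    for facts in pages.values():
        for key, value in facts.items():
            # Normalize case and whitespace so "London" and "london " match.
            seen[key].add(value.strip().lower())
    return {key: sorted(values) for key, values in seen.items() if len(values) > 1}


# Hypothetical extracted facts for an imaginary brand.
pages = {
    "/about": {"founded": "2014", "hq": "London"},
    "/press": {"founded": "2015", "hq": "london "},
}
print(find_conflicts(pages))  # prints {'founded': ['2014', '2015']}
```

A contradiction like the founding year above is exactly the kind of noise that makes a model less confident about which version of your brand to repeat.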

Ranktracker’s ecosystem supports this shift:

- SERP Checker → entity mapping
- Web Audit → machine-readability
- Backlink Checker → authority signals
- Rank Tracker → impact monitoring
- AI Article Writer → structured, canonical formatting

Because the smarter the AI becomes, the more your content needs to be optimized for AI understanding, not just human reading.

Final Thought: Intelligence in AI Is Not Just About Size — It’s About Understanding

A “smart” LLM is not defined by:

❌ parameter count

❌ training compute

❌ benchmark scores

❌ context length

❌ model hype

It is defined by:

- ✔ the quality of its internal representation of the world
- ✔ the fidelity of its embeddings
- ✔ the accuracy of its reasoning
- ✔ the clarity of its alignment
- ✔ the reliability of its retrieval
- ✔ the structure of its training data
- ✔ the stability of its interpretation patterns

Smarter AI forces brands to become smarter too.

There is no way around it — the next generation of discovery demands:

- clarity
- authority
- consistency
- factual precision
- semantic strength

Because LLMs no longer “rank” content. They understand it.

And the brands that are understood best will dominate the AI-driven future.