Intro

In traditional SEO, the goal was simple:

Rank on page 1.

In AI search, the goal is different:

Become a trusted data source inside Large Language Models.

If LLMs:

- retrieve your content
- cite your brand
- embed your definitions
- reinforce your entities
- prefer your pages
- use you during synthesis

then you win.

If they don’t? It doesn’t matter how good your Google rankings are. You are invisible in generative answers.

This article explains exactly how to ensure your site becomes a trusted source for LLMs — not through tricks, but through semantic clarity, entity stability, data cleanliness, and machine-readable authority.

1. What Makes an LLM Trust a Source? (The Real Criteria)

LLMs do not trust sites because of:

- domain age
- DA/DR
- word count
- keyword density
- sheer volume of content

Instead, LLM trust emerges from:

- ✔ entity stability
- ✔ factual consistency
- ✔ cluster authority
- ✔ clean embeddings
- ✔ strong schema
- ✔ consensus alignment
- ✔ provenance
- ✔ recency
- ✔ cross-site corroboration
- ✔ high-confidence vectors

LLMs evaluate patterns, not metrics.

They prefer sources that consistently represent concepts in clear, stable, unambiguous ways.

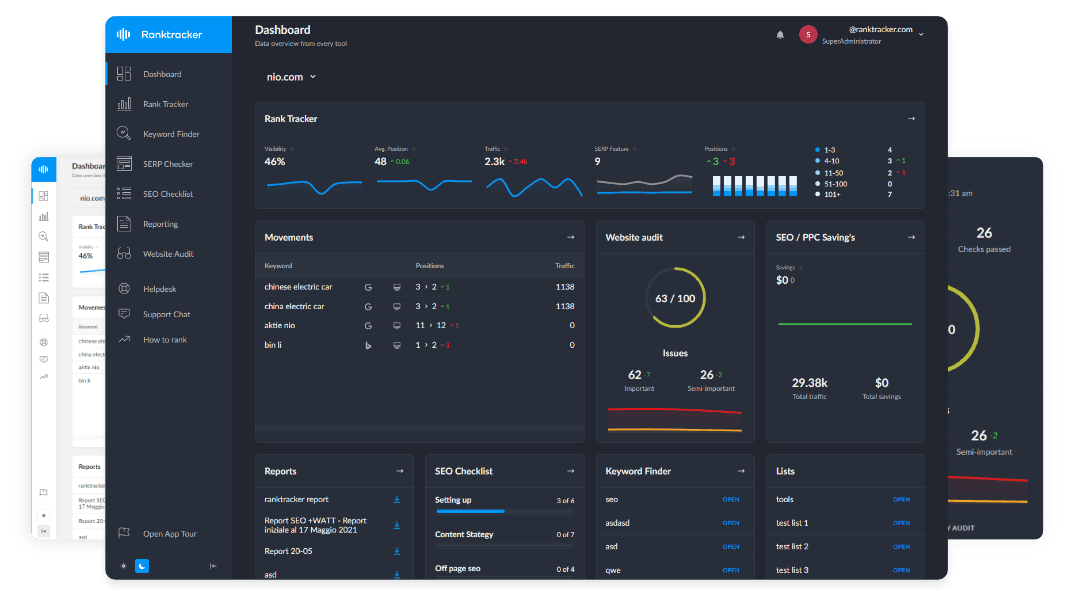

This is your job to engineer.

2. The LLM Trust Stack (How Models Decide Who to Cite)

LLMs follow a five-layer trust pipeline:

Layer 1 — Crawlability & Ingestion

Can the model reliably fetch, load, and parse your pages?

If not → you’re excluded immediately.
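
A first check at this layer is whether your robots.txt lets AI crawlers in at all. The sketch below uses Python's standard `urllib.robotparser` against a made-up robots.txt body. The sample file, the example URL, and the short list of crawler tokens are assumptions for illustration, not a complete inventory of AI user agents.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body; swap in the contents of your own file.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Disallow: /admin/
"""

# Illustrative crawler tokens only; check each vendor's documentation.
AI_USER_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def crawl_permissions(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, per user agent, whether robots.txt allows fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}
```

Calling `crawl_permissions(SAMPLE_ROBOTS_TXT, "https://example.com/private/page", AI_USER_AGENTS)` reports which of the listed crawlers may fetch that page. This only covers robots.txt rules; server errors, timeouts, and JavaScript-only rendering need separate checks.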

Layer 2 — Machine Readability

Can the model:

- chunk
- embed
- parse
- segment
- understand
- classify

your content?

If not → you will never be retrieved.
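
To get a feel for what "chunkable" means, here is a minimal sketch of heading-based chunking. It assumes your content is available as Markdown-style text with `##` and `###` headings; real retrieval pipelines use their own splitters, so treat `chunk_by_heading` as a hypothetical stand-in rather than how any particular system works.

```python
import re

# Matches H2 and H3 headings written as "## Title" or "### Title".
HEADING = re.compile(r"^(#{2,3})\s+(.*)$")


def chunk_by_heading(markdown: str) -> list[dict[str, str]]:
    """Split text into chunks, one per H2/H3 section."""
    chunks: list[dict[str, str]] = []
    heading = "(intro)"
    body: list[str] = []
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            # Close the previous section before starting a new one.
            text = "\n".join(body).strip()
            if text:
                chunks.append({"heading": heading, "text": text})
            heading, body = match.group(2).strip(), []
        else:
            body.append(line)
    text = "\n".join(body).strip()
    if text:
        chunks.append({"heading": heading, "text": text})
    return chunks
```

If a page produces chunks that mix several topics under one heading, or chunks with no heading at all, that is a hint the structure is hard for a machine to segment.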

Layer 3 — Entity Clarity

Are your entities:

- defined
- consistent
- stable
- well-linked
- schema-reinforced
- corroborated externally?

If not → the model cannot trust your meaning.

Layer 4 — Content Reliability

Is your content:

- factually consistent
- internally aligned
- externally corroborated
- cleanly formatted
- structurally logical
- updated regularly?

If not → you’re too risky to cite.

Layer 5 — Generative Suitability

Does your content lend itself to:

- summarization
- extraction
- embedding
- synthesis
- attribution?

If not → you get outranked by cleaner, clearer sources.

This trust stack determines which sites LLMs choose — every time.

3. How LLMs Judge Trust (Deep Technical Explanation)

Trust isn’t a single number.

It emerges from multiple subsystems.

1. Embedding Confidence

LLMs trust chunks that embed cleanly.

Clean vectors have:

- clear topic focus
- consistent entity references
- minimal ambiguity
- stable definitions

Noisy vectors = low trust.
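
The idea of topic focus can be illustrated with a toy similarity check. The sketch below is not how LLMs embed text: it swaps in plain bag-of-words vectors and cosine similarity as a stand-in, and the query and both sample chunks are invented for the example.

```python
import math
import re
from collections import Counter

# Invented sample texts for illustration.
QUERY = "what is rank tracking"
FOCUSED = "Rank tracking is the practice of monitoring where a page ranks for a keyword."
MIXED = (
    "Our team loves coffee. Rank tracking matters too. "
    "Also, here is our holiday schedule, office address, and parking policy."
)


def bow(text: str) -> Counter:
    """Bag-of-words vector: lowercase token counts (a crude embedding stand-in)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


focused_score = cosine(bow(QUERY), bow(FOCUSED))
mixed_score = cosine(bow(QUERY), bow(MIXED))
```

With these samples, `focused_score` comes out higher than `mixed_score`: the off-topic sentences dilute the mixed chunk's vector. Real embedding models are far richer, but the same dilution effect is why one concept per chunk tends to retrieve better.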

2. Knowledge Graph Alignment

Models check:

- does this page match known entities?
- does it contradict core facts?
- does it map to external sources?

Good alignment = higher trust.

3. Consensus Detection

LLMs compare your content to:

- Wikipedia
- major news outlets
- authoritative industry sites
- government data
- high-E-E-A-T sources

If your content reinforces consensus → trust rises. If it contradicts consensus → trust drops.

4. Recency Matching

Fresh, updated content gets:

- higher temporal trust
- stronger retrieval weight
- better generative priority

Stale content is considered unsafe.

5. Provenance Signals

Models evaluate:

- authorship
- organization
- external mentions
- schema
- structured identity

Canonical identity = canonical trust.

4. The Framework: How to Become a Trusted LLM Source

Here is the complete system.

Step 1 — Stabilize Your Entities (The Foundation)

Everything begins with entity clarity.

Do this:

- ✔ Use consistent names
- ✔ Create canonical definitions
- ✔ Build strong clusters
- ✔ Reinforce meanings in multiple pages
- ✔ Add Organization, Product, Article, and Person schema
- ✔ Use the same descriptions everywhere
- ✔ Avoid synonym drift

Stable entities → stable embeddings → stable trust.
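
One way to spot synonym drift is to count how often each spelling of a name appears across your pages. The sketch below assumes you already have page text in a dictionary keyed by URL path; the brand "Acme Analytics" and its variants are hypothetical.

```python
import re
from collections import Counter


def name_variants(pages: dict[str, str], variants: list[str]) -> Counter:
    """Count case-sensitive, whole-phrase occurrences of each variant across pages."""
    counts: Counter = Counter()
    for text in pages.values():
        for variant in variants:
            # Lookarounds stop a variant from matching inside a longer word.
            pattern = r"(?<!\w)" + re.escape(variant) + r"(?!\w)"
            counts[variant] += len(re.findall(pattern, text))
    return counts


def drifting_variants(counts: Counter, canonical: str) -> list[str]:
    """Return non-canonical variants that actually appear on the site."""
    return sorted(v for v, n in counts.items() if v != canonical and n > 0)
```

Run `name_variants` over your crawl export with the canonical name plus every misspelling you can think of, then fix any page that `drifting_variants` flags. The check is deliberately case-sensitive, since casing differences are a common form of drift.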

Step 2 — Build Machine-Readable Content Structures

LLMs must be able to parse your pages.

Focus on:

- clean H2/H3 hierarchy
- short paragraphs
- one concept per section
- definition-first writing
- semantic lists
- structured summaries
- no long blocks or mixed topics

Machine readability drives:

- cleaner embeddings
- better retrieval
- higher generative eligibility
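
A few of these structure rules can be checked automatically. The sketch below flags skipped heading levels and over-long paragraphs in Markdown-style text. It assumes blocks are separated by blank lines, and the 80-word limit is an arbitrary placeholder, not a known threshold used by any model.

```python
import re

# A heading line: one to six "#" characters followed by text.
HEADING = re.compile(r"^(#{1,6})\s+\S")


def lint_structure(markdown: str, max_words: int = 80) -> list[str]:
    """Flag skipped heading levels and paragraphs longer than `max_words`."""
    issues: list[str] = []
    previous_level = 0
    for block in re.split(r"\n\s*\n", markdown.strip()):
        if not block.strip():
            continue
        first_line = block.splitlines()[0]
        match = HEADING.match(first_line)
        if match:
            level = len(match.group(1))
            # Going from H2 straight to H4 breaks the hierarchy.
            if previous_level and level > previous_level + 1:
                issues.append(
                    f"heading level jumps from H{previous_level} to H{level}: "
                    f"{first_line.strip()}"
                )
            previous_level = level
        else:
            words = len(block.split())
            if words > max_words:
                issues.append(f"paragraph has {words} words (limit {max_words})")
    return issues
```

An empty result does not prove a page is well structured; it only means these two mechanical problems were not found.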

Step 3 — Add JSON-LD to Define Meaning Explicitly

JSON-LD reinforces:

- identity
- authorship
- topic
- product definitions
- entity relationships

This reduces ambiguity dramatically.

Use:

- Article
- Person
- Organization
- FAQPage
- Product
- BreadcrumbList

Schema = LLM trust scaffolding.
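
As a minimal sketch of what this looks like in practice, the function below builds Article markup with a nested Person author and Organization publisher. The property names come from schema.org; the function name and every value you pass in are placeholders for your own data.

```python
import json


def article_jsonld(
    headline: str,
    author_name: str,
    org_name: str,
    org_url: str,
    date_published: str,
    date_modified: str,
) -> str:
    """Build Article JSON-LD with a Person author and an Organization publisher."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name, "url": org_url},
        "datePublished": date_published,  # ISO 8601, e.g. "2025-01-10"
        "dateModified": date_modified,
    }
    return json.dumps(data, indent=2)
```

Embed the returned string in the page inside a `<script type="application/ld+json">` tag. Keep the author and organization names identical to the ones used elsewhere on the site, otherwise the markup adds ambiguity instead of removing it.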

Step 4 — Maintain Data Cleanliness Across Your Site

Dirty data weakens trust:

- conflicting definitions
- outdated facts
- inconsistent terminology
- duplicate content
- redundant pages
- mismatched metadata

Clean data = stable LLM understanding.
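
Duplicate content is the easiest of these to detect mechanically. The sketch below hashes each page's normalized text and groups pages that collide. It only catches exact duplicates after whitespace and case normalization; near-duplicates would need a fuzzier technique, and the `pages` dictionary is an assumed input format.

```python
import hashlib
import re
from collections import defaultdict


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so trivial differences do not hide duplicates."""
    return re.sub(r"\s+", " ", text).strip().lower()


def find_duplicates(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose normalized body text is identical."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, text in pages.items():
        digest = hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]
```

Each returned group is a candidate for consolidation: pick one canonical URL and redirect or rewrite the rest.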

Step 5 — Ensure Content Freshness & Recency

LLMs heavily weight recency for:

- tech
- SEO
- finance
- cybersecurity
- reviews
- statistics
- legal topics
- medical information

Use:

- updated timestamps
- JSON-LD dateModified
- meaningful updates
- cluster-wide freshness

Fresh = trustworthy.
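
To keep a cluster fresh you first need to know which pages have gone stale. The sketch below assumes you can export each page's `dateModified` value as an ISO date string. The 365-day cutoff is an arbitrary example, not a known model threshold; fast-moving topics likely need a shorter window.

```python
from datetime import date


def stale_pages(
    modified: dict[str, str], today: date, max_age_days: int = 365
) -> list[str]:
    """Return URLs whose dateModified is older than `max_age_days`."""
    stale = []
    for url, iso_date in modified.items():
        age_days = (today - date.fromisoformat(iso_date)).days
        if age_days > max_age_days:
            stale.append(url)
    return sorted(stale)
```

Pass `date.today()` as `today` in real use. Only bump `dateModified` when the content has genuinely changed, in line with the "meaningful updates" point above.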

Step 6 — Build Strong Internal Linking for Semantic Integrity

Internal linking shows AI models:

- conceptual relationships
- topic clusters
- page hierarchy
- supporting evidence

LLMs use these signals to create internal knowledge maps.
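
A practical starting point is finding pages that no internal link path reaches. The sketch below walks a link map from the homepage and reports anything left unvisited. The `links` structure (page path mapped to the paths it links to) is an assumed export format from whatever crawler you use.

```python
def orphan_pages(links: dict[str, list[str]], home: str = "/") -> list[str]:
    """Return pages that cannot be reached from `home` by following internal links."""
    seen = {home}
    stack = [home]
    while stack:
        page = stack.pop()
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                stack.append(target)
    # Anything in the link map that the walk never visited is an orphan.
    return sorted(set(links) - seen)
```

A page that links out but receives no links in, such as an old post that only points back to the homepage, shows up as an orphan and is a good candidate for linking from its topic cluster.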

Step 7 — Create Extraction-Friendly Blocks

AI search engines need material they can:

- quote
- summarize
- chunk
- embed
- cite

Use:

- definitions
- Q&A sections
- step-by-step processes
- lists
- key takeaways
- comparison tables (sparingly)

Extraction-friendly content = citation-friendly content.

Step 8 — Align Your Content With External Consensus

LLMs cross-check your information with:

- high-authority sites
- public data
- Wikipedia
- industry references

If you contradict consensus, your trust collapses unless:

- your brand is authoritative enough
- your content is well-cited
- your evidence is strong

Don’t fight consensus unless you can win.

Step 9 — Strengthen Off-Site Entity Reinforcement

External sources should confirm:

- your brand name
- your descriptions
- your product list
- your features
- your positioning
- your founder identity

LLMs draw on sources from across the web, not just your own site, so your brand facts must be consistent everywhere they appear.
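
One rough way to audit this is to compare your canonical description against the text used on external profiles. The sketch below uses `difflib.SequenceMatcher` from Python's standard library. The 0.9 threshold and the `profiles` dictionary (source name mapped to the description found there) are assumptions you would tune and populate yourself.

```python
from difflib import SequenceMatcher


def description_drift(
    canonical: str, profiles: dict[str, str], threshold: float = 0.9
) -> dict[str, float]:
    """Return sources whose description similarity to `canonical` falls below `threshold`."""
    drift = {}
    for source, text in profiles.items():
        ratio = SequenceMatcher(None, canonical.lower(), text.lower()).ratio()
        if ratio < threshold:
            drift[source] = round(ratio, 2)
    return drift
```

Character-level similarity is a blunt instrument: it flags rewordings as well as real contradictions, so treat the output as a review list rather than a verdict.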

Step 10 — Avoid Patterns That Decrease LLM Trust

These are the biggest red flags:

- ❌ keyword-stuffed content
- ❌ long, unfocused paragraphs
- ❌ AI-written fluff with no substance
- ❌ inconsistent schema
- ❌ ghost authors
- ❌ factual contradictions
- ❌ generic definitions
- ❌ domain-wide duplication
- ❌ unstructured pages

LLMs deprioritize sites that produce noise.

5. How Ranktracker Tools Help Build LLM Trust (Non-Promotional Mapping)

This section maps tools functionally — without sales tone.

Web Audit → Detects LLM Accessibility Issues

Including:

- missing schema
- bad structure
- duplicate content
- broken internal linking
- slow pages blocking AI crawlers

Keyword Finder → Finds LLM-Intent Topics

Helps identify question-first formats that convert well into embeddings.

SERP Checker → Reveals Answer Patterns

Shows extraction styles Google prefers — which LLMs often mimic.

Backlink Checker / Monitor → Reinforces Entity Authority

External mentions strengthen consensus signals.

6. How You Know You’ve Become a Trusted LLM Source

These signals indicate success:

- ✔ ChatGPT begins citing your site
- ✔ Perplexity uses your definitions
- ✔ Google AI Overviews pulls your lists
- ✔ Gemini uses your examples
- ✔ your brand appears in generative comparisons
- ✔ AI models no longer hallucinate about you
- ✔ your product descriptions appear verbatim in summaries
- ✔ your canonical definitions spread across AI outputs

When this happens, you’re no longer competing in SERPs. You’re competing in the model’s memory itself.

Final Thought: You Don’t Win AI Search by Ranking. You Win by Becoming a Source

Google ranked pages. LLMs cite knowledge.

Google measured relevance. LLMs measure meaning.

Google rewarded backlinks. LLMs reward clarity and consistency.

Being a trusted LLM source is now the highest form of visibility. It requires:

- clear entities
- clean data
- strong schema
- machine-readable structure
- stable definitions
- consistent metadata
- cluster authority
- consensus alignment
- meaningful freshness

Do these things right, and LLMs don’t just read your content — they integrate it into their understanding of the world.

That is the new frontier of search.