Intro

In writing for human readers, repetition is usually avoided. In writing meant to be read by AI systems, repetition is a feature, not a flaw.

Large Language Models (LLMs) learn, interpret, and retrieve information through:

- pattern recognition
- entity stability
- semantic consistency
- embedding clarity
- textual regularity

If your writing style is inconsistent, or if your entity names vary, LLMs lose confidence in your meaning.

This leads to:

- semantic drift
- incorrect citations
- lost entity recognition
- lower retrieval ranking
- inconsistent AI summaries
- hallucinated attributes
- exclusion from AI Overviews
- misclassification in knowledge graphs

This guide explains why style consistency and entity repetition are not optional — they are fundamental to LLM visibility.

1. Why LLMs Depend on Consistent Signals

Unlike traditional search engines, LLMs do not rank content by URLs and link-based scores such as PageRank. They rely on:

- ✔ embeddings
- ✔ patterns
- ✔ repeated structures
- ✔ entity stability
- ✔ contextual similarity
- ✔ cross-source validation

LLMs aggregate meaning across thousands of text fragments. If your signals aren’t consistent, models can’t:

- cluster your content together
- consolidate your brand identity
- recognize your expertise
- connect your entities
- interpret your writing style

Consistency = understandability. Understandability = trust. Trust = retrieval.

2. Entity Repetition: Why It’s Essential for LLM Interpretation

Entities — people, companies, products, concepts — must be handled with strict repetition.

Example:

Correct (repeated consistently): Ranktracker, Ranktracker, Ranktracker

Incorrect (semantic drift): Rank Tracker, RankTracker, RT, The Rank Tracker tool, Your rank tool

To an LLM, these are different strings, and therefore:

- different embeddings
- different entities
- different meanings

LLMs do not reliably normalize entity-name variants unless the entity is very prominent in their training data, which most brands, niches, and products are not.

Consistency is the only solution.
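One way to catch this drift before publishing is to scan a draft for surface forms that differ from the canonical name. The sketch below is a minimal illustration, not a definitive tool: the function name `find_entity_variants` and the matching rule (case-insensitive, with optional spaces or hyphens between the word parts) are assumptions, and it will not catch abbreviations such as "RT" or paraphrases such as "Your rank tool".

```python
import re
from collections import Counter

def find_entity_variants(text: str, parts: tuple[str, ...], canonical: str) -> Counter:
    """Count surface forms built from `parts` that differ from `canonical`.

    Hypothetical helper: matches the word parts joined by optional
    spaces or hyphens, ignoring case.
    """
    pattern = r"\b" + r"[\s-]*".join(re.escape(p) for p in parts) + r"\b"
    found = Counter(m.group(0) for m in re.finditer(pattern, text, flags=re.IGNORECASE))
    # Keep only the forms that are not exactly the canonical spelling.
    return Counter({form: n for form, n in found.items() if form != canonical})

draft = (
    "Ranktracker tracks keyword positions. Rank Tracker also audits sites. "
    "Many teams use RankTracker daily, and Ranktracker reports are exportable."
)
variants = find_entity_variants(draft, ("rank", "tracker"), "Ranktracker")
for form, count in variants.items():
    print(f"{form!r} appears {count} time(s); use 'Ranktracker' instead")
```

Abbreviations and descriptive phrases need an explicit list of forbidden variants, because no spelling rule can derive them from the canonical name.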

3. How LLMs Encode Entities (Technical Breakdown)

When an LLM processes an entity name, it splits the string into tokens and builds a vector representation (an embedding) from those tokens and their context. That representation reflects:

- relationships
- attributes
- associations
- surrounding context
- factual reinforcement
- source patterns

If you use multiple variations:

- embeddings scatter
- context fragments
- attributes split
- meaning becomes noisy
- retrieval becomes unreliable

This is called entity fragmentation.
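The splitting effect can be illustrated without a real model. The toy sketch below is a stand-in built on assumptions, not a description of how an LLM computes embeddings: it tallies the context words that co-occur with each surface form in a few made-up mentions, then shows the same evidence once every variant is mapped to one canonical name. The sample data, `canonical_of`, and `context_profiles` are all hypothetical.

```python
from collections import Counter, defaultdict

# Made-up (surface form, context) pairs for illustration only.
mentions = [
    ("Ranktracker", "keyword tracking platform"),
    ("Rank Tracker", "site audit tool"),
    ("RankTracker", "keyword research tool"),
    ("Ranktracker", "backlink monitoring platform"),
]

# Hypothetical alias map: every variant points to the canonical name.
canonical_of = {"Rank Tracker": "Ranktracker", "RankTracker": "Ranktracker"}

def context_profiles(pairs, alias_map=None):
    """Group context words by entity name, optionally merging aliases."""
    profiles = defaultdict(Counter)
    for name, context in pairs:
        key = (alias_map or {}).get(name, name)
        profiles[key].update(context.split())
    return dict(profiles)

fragmented = context_profiles(mentions)
consolidated = context_profiles(mentions, canonical_of)

print(len(fragmented), "separate profiles when variants are used")
print(len(consolidated), "profile when one name is used")
print(consolidated["Ranktracker"].most_common(3))
```

With variants, each name carries only part of the evidence. With one name, every attribute accumulates in a single profile.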

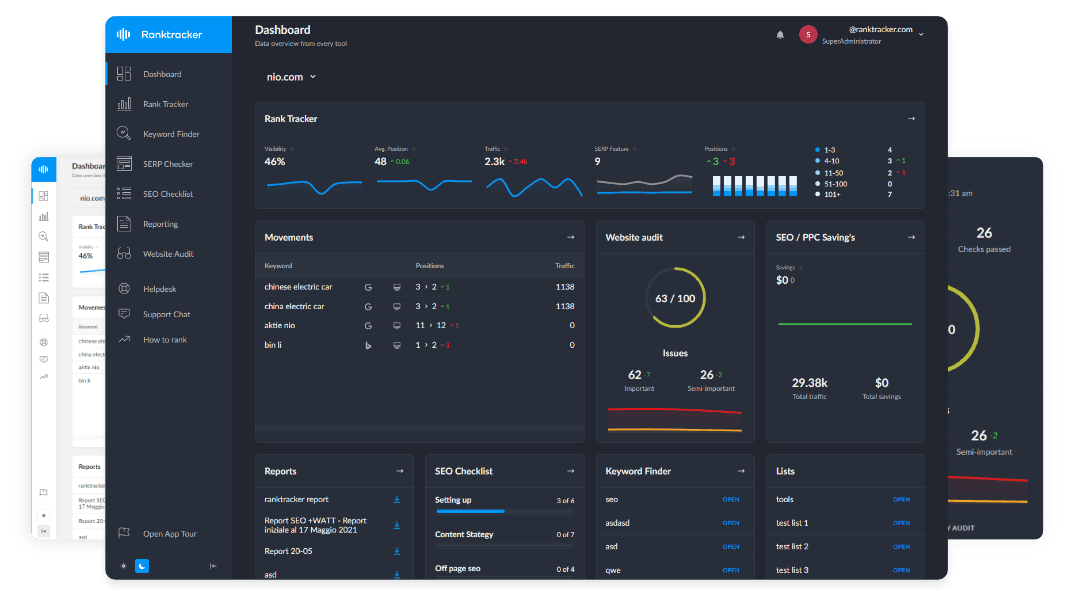

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

The opposite — consistent usage — produces entity consolidation.

Entity consolidation improves:

- ✔ retrieval ranking
- ✔ citation frequency
- ✔ knowledge graph stability
- ✔ reduced hallucination
- ✔ brand consistency across model outputs

4. Style Consistency: The Hidden LLM Optimization Layer

LLMs expect text to follow predictable patterns. If your style fluctuates wildly across pages or even within a single article, the model has trouble:

- segmenting meaning
- associating content with your brand
- connecting clusters
- identifying authorship style
- reinforcing your authority

Style consistency creates a stable “signature” in the model.

LLMs learn:

- your tone
- your formatting habits
- your preferred structure
- your typical paragraph length
- how you introduce definitions
- how you present facts
- how you reference entities

Consistency builds a semantic fingerprint.

When your fingerprint is stable, models are more likely to:

- trust your content
- retrieve it
- classify it
- cite it
- reuse it in generative answers

5. What Happens When Entities or Style Drift? (The Damage)

Inconsistency causes:

1. Semantic Drift

The model misinterprets your entity or topic over time.

2. Embedding Noise

Variations create additional, lower-confidence embeddings.

3. Lost Entity Recognition

The model stops linking pages to the same concept.

4. Lower Retrieval Probability

Noisy signals mean weaker vector matches.

5. Confused Knowledge Graph Placement

Inconsistent entity naming breaks graph alignment.

6. Hallucinated Attributes

The model “guesses” missing meaning with inaccuracies.

7. Lost Visibility in AI Search

Your content won’t appear in summaries or answers.

Style inconsistency weakens your brand's presence across the entire AI ecosystem.

6. The Rule of Repetition: How Much Is Enough?

LLMs need enough repetition to classify meaning with confidence.

Here is the ideal repetition pattern:

1. Entity repeated in the title

Ensures the page-level embedding is anchored.

2. Entity repeated in the intro (1–2 times)

Signals importance early.

3. Entity repeated at every definitional section

Stabilizes contextual meaning.

4. Entity repeated in examples and explanations

Reinforces real-world association.

5. Entity repeated in the conclusion

Strengthens the final summary embedding.

But repetition must read naturally.

Avoid keyword stuffing. Focus on clarity.
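The placement pattern above can be checked mechanically. The sketch below is a minimal illustration under stated assumptions: the article is passed in as a title plus a list of paragraph strings, the first paragraph counts as the intro, the last as the conclusion, and the function name `check_entity_placement` is hypothetical. It covers the title, intro, and conclusion only; definitional sections and examples would need section markers that this sketch does not model.

```python
def check_entity_placement(title: str, paragraphs: list[str], entity: str) -> dict[str, bool]:
    """Report whether `entity` appears in the title, intro, and conclusion.

    Assumes the first paragraph is the intro and the last is the conclusion.
    Matching is exact and case-sensitive, so variants do not count.
    """
    if not paragraphs:
        return {"title": entity in title, "intro": False, "conclusion": False}
    intro_count = paragraphs[0].count(entity)
    return {
        "title": entity in title,
        # The pattern above suggests 1-2 mentions in the intro.
        "intro": 1 <= intro_count <= 2,
        "conclusion": entity in paragraphs[-1],
    }

report = check_entity_placement(
    title="What Is Ranktracker?",
    paragraphs=[
        "Ranktracker is an SEO platform.",
        "It includes several tools for audits and tracking.",
        "In short, the platform covers the core SEO workflow.",
    ],
    entity="Ranktracker",
)
for location, ok in report.items():
    print(f"{location}: {'ok' if ok else 'missing or off-pattern'}")
```

In this sample the conclusion never names the entity, so the check flags it. The intro check also fails when the name appears more than twice, which is a rough guard against stuffing.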

7. Style Consistency: The 10-Point Checklist

To maintain LLM-friendly stylistic consistency, all articles should follow:

- ✔ definition-first writing
- ✔ clean H2/H3 hierarchy
- ✔ answerable paragraphs
- ✔ 2–4 sentence blocks
- ✔ consistent tone
- ✔ literal sentence openings
- ✔ machine-readable transitions
- ✔ consistent formatting for lists
- ✔ stable terminology
- ✔ uniform levels of detail

This structure becomes part of your brand identity inside the model.
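Some checklist items are easy to verify automatically. As one example, the sketch below flags paragraphs that fall outside the 2–4 sentence range. It is a rough illustration, not a finished linter: the function name `paragraphs_outside_range` is hypothetical, paragraphs are assumed to be separated by blank lines, and the sentence counter is deliberately naive.

```python
import re

def paragraphs_outside_range(text: str, low: int = 2, high: int = 4) -> list[tuple[int, int]]:
    """Return (paragraph_index, sentence_count) for blocks outside low..high.

    Paragraphs are split on blank lines; sentences are counted with a
    naive rule (., ! or ? followed by whitespace or end of text), which
    miscounts abbreviations such as "e.g.".
    """
    flagged = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for index, paragraph in enumerate(paragraphs):
        count = len(re.findall(r"[.!?](?:\s|$)", paragraph))
        if not low <= count <= high:
            flagged.append((index, count))
    return flagged

article = (
    "Entity stability means using one name. It keeps signals aligned.\n\n"
    "This block has only one sentence.\n\n"
    "One. Two. Three. Four. Five."
)
print(paragraphs_outside_range(article))
```

Here the second and third paragraphs are flagged, with one and five sentences respectively.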

8. How to Maintain Entity Stability Across Your Site

Follow these principles:

1. Use one canonical name for each entity

“Ranktracker” → never “Rank Tracker.”

2. Build a canonical entity dictionary

A simple sheet with:

- Entity
- Allowed terms
- Forbidden variants
- Schema definitions
- Associated pages

3. Add JSON-LD for all key entities

Systems that parse structured data can use schema markup as grounding data for your entities.

4. Reinforce entities in clusters

All related articles must use:

- the same name
- the same definition
- the same position
- the same attributes

5. Avoid synonyms for entities

Synonyms break the embedding.

6. Use consistent anchor text for internal links

LLMs use link anchor patterns to infer entity identity.
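The entity dictionary and JSON-LD principles above can be combined in one small data structure. The sketch below is one possible shape, not a prescribed format: the class `EntityEntry`, its field names, the choice of the schema.org `Organization` type, and the placeholder URL and page path are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass, field

@dataclass
class EntityEntry:
    """One row of a canonical entity dictionary (field names are assumptions)."""
    canonical: str
    schema_type: str  # a schema.org type, e.g. "Organization" (assumed here)
    allowed_terms: list[str] = field(default_factory=list)
    forbidden_variants: list[str] = field(default_factory=list)
    associated_pages: list[str] = field(default_factory=list)

    def violations(self, text: str) -> list[str]:
        """Return the forbidden variants that occur in `text`."""
        return [v for v in self.forbidden_variants if v in text]

    def to_json_ld(self, url: str) -> str:
        """Serialize a minimal schema.org JSON-LD object for this entity."""
        data = {
            "@context": "https://schema.org",
            "@type": self.schema_type,
            "name": self.canonical,
            "url": url,
        }
        return json.dumps(data, indent=2)

entry = EntityEntry(
    canonical="Ranktracker",
    schema_type="Organization",
    allowed_terms=["Ranktracker"],
    forbidden_variants=["Rank Tracker", "RankTracker"],
    associated_pages=["/about"],  # placeholder path
)

print(entry.violations("Try Rank Tracker today."))
print(entry.to_json_ld("https://example.com"))  # placeholder URL
```

Keeping the name, the forbidden variants, and the schema output in one record means the on-page text and the structured data draw on the same source and cannot drift apart.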

9. The Ideal Writing Style for LLM Interpretation

The ideal style is:

- ✔ literal
- ✔ precise
- ✔ structured
- ✔ semantically clean
- ✔ definition-first
- ✔ repetitive (in controlled ways)
- ✔ consistent across all content

But still:

- ✔ human
- ✔ intentional
- ✔ expert-driven
- ✔ narrative where appropriate

This hybrid style is the “sweet spot” for LLM readability and brand preservation.

10. How Ranktracker Tools Support Style & Entity Consistency (Functional Mapping)

Web Audit

Flags:

- inconsistent headings
- duplicate content
- missing schema
- URL inconsistencies
- crawl issues affecting embeddings

AI Article Writer

Produces LLM-friendly structure you can personalize.

Backlink Monitor

Validates off-site mentions — ensuring external entity consistency.

SERP Checker

Shows how Google recognizes your entity patterns.

Final Thought: Consistency Isn’t Cosmetic, It’s Core to LLM Visibility

Style consistency and entity repetition are not “nice-to-haves.” They determine:

- how LLMs interpret your brand
- how they summarize your content
- how they classify your entities
- how they retrieve your pages
- how they cite you
- how they represent you across the AI ecosystem

In the age of LLM search, you are not optimizing for keywords — you’re optimizing for meaning stability.

Stable meaning → stable embeddings → stable trust → stable visibility.

Control your style. Control your entities. Control your presence inside the model.

That is how brands win the generative search era.