Intro

Developers and engineering teams choosing an AI model for their products care about more than marketing copy and reasoning quality. They care about technical performance, API flexibility, cost, context handling, and how the model fits into complex software stacks.

Claude and Mistral are two models frequently discussed in this context in 2026 — one representing a commercially managed, deep reasoning model, and the other a flexible, efficient open-model alternative. Below is a detailed comparison for a developer and API audience.

Overview of Both Models

What Is Claude?

Claude is a large language model developed by Anthropic that emphasizes reasoning, safety, and structured output. It is marketed toward enterprises and professional use cases with complex workflows, where consistency matters. Deployment is available via a managed API that abstracts infrastructure and security, and Anthropic places a strong emphasis on long-context handling and alignment. (Epista)

What Is Mistral?

Mistral is developed by Mistral AI and represents a lighter, cost-efficient series of models that are open to broad usage — including open weights for some variants. The Mistral family includes lightweight, balanced, and large MoE-style models designed for developers who want flexible deployment, cost control, and performance at scale. (AIonX)

Core Differences: Architecture & Philosophy

Commercial vs. Open-Oriented Design

Claude

- Closed-source, proprietary model delivered through Anthropic’s managed APIs.

- Emphasis on safety, alignment, and structured reasoning.

- Designed to be “plug-and-play” for enterprise use.

- Strong support for long, complex interactions and high-value reasoning tasks. (Epista)

Mistral

- More open ecosystem with a range of models from lightweight to large.

- Appealing for developers who want self-hosted, customizable deployment or experimentation.

- Often seen as offering flexible token pricing and efficient performance. (AIonX)

For teams prioritizing deep reasoning with minimal engineering overhead, Claude’s managed model is compelling. For teams needing open access and control over deployment, Mistral’s range shines.

API & Integration Considerations

Ease of Use

Claude API

- Anthropic manages the model hosting, scaling, and maintenance.

- Works well for teams that want stable integration with robust uptime and performance.

- Because the API is managed, compliance and safety defaults come built in. (Epista)

Mistral API / Self-Hosting

- Provides APIs but also allows deployment via self-hosted or third-party services.

- Offers greater flexibility if you want to run the model on your own infrastructure, edge clusters, or hybrid cloud setup.

- Developers can experiment with different Mistral variants based on performance needs. (AIonX)

Mistral’s flexibility is attractive for custom infrastructure and scaling, while Claude’s managed API reduces operational work and offers more predictable stability.
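One way to keep both options open is to hide the provider behind a small interface, so application code does not care whether a request goes to a managed API or a self-hosted endpoint. The sketch below is illustrative only: `ChatBackend`, `ManagedApiBackend`, `SelfHostedBackend`, the model names, and the URL are all hypothetical, and the backends are stubs that make no network calls. A real version would wrap the vendor SDK or an HTTP client inside `complete`.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into a completion string."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class ManagedApiBackend:
    """Stand-in for a hosted API (hypothetical stub, no network call)."""

    model: str

    def complete(self, prompt: str) -> str:
        # A real implementation would call the vendor's SDK here.
        return f"[managed:{self.model}] {prompt}"


@dataclass
class SelfHostedBackend:
    """Stand-in for a model served on your own infrastructure."""

    base_url: str
    model: str

    def complete(self, prompt: str) -> str:
        # A real implementation would send an HTTP request to base_url.
        return f"[self-hosted:{self.model}@{self.base_url}] {prompt}"


def summarize(backend: ChatBackend, text: str) -> str:
    """Application code depends only on the ChatBackend protocol."""
    return backend.complete(f"Summarize: {text}")
```

Swapping providers then becomes a configuration change: pass `ManagedApiBackend(model="claude-placeholder")` or `SelfHostedBackend(base_url="http://localhost:8000", model="mistral-placeholder")` to `summarize` without touching the calling code.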

Context Windows and Scaling

Claude

Claude’s flagship models (e.g., Opus) are designed to handle very large context windows, often significantly larger than those of many other models. One cited figure, for example, lists Claude Sonnet at up to ~200,000 tokens of context, well above most open alternatives. (LLM Stats)

Larger contexts help with:

- Document summarization

- Multi-document reasoning

- Complex codebase analysis

Mistral

Mistral’s flagship models (e.g., Mistral Large 2 and variants) also support extended context (e.g., ~128,000 tokens), though typically less than Claude’s largest models. (LLM Stats)

Mistral’s trade-offs include:

- Smaller context limits (~128,000 vs. ~200,000 tokens in the figures above)

- Faster throughput and lower per-token cost

Developers should choose based on whether the application is depth-intensive or speed-/volume-intensive.
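A quick way to sanity-check this choice is to estimate whether a document fits in each context window before sending it. The sketch below uses the approximate limits quoted above and assumes roughly 4 characters per token, which is only a rule of thumb for English text; a real check should use the provider's own tokenizer. The function names and the 4,000-token output reserve are illustrative assumptions.

```python
import math

# Approximate context limits quoted in the text above (in tokens).
CONTEXT_LIMITS = {"claude": 200_000, "mistral": 128_000}

# Assumption: ~4 characters per token, a rough heuristic for English.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate based on character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits(text: str, family: str, reserve_for_output: int = 4_000) -> bool:
    """True if the prompt plus an output reserve fits the context limit."""
    return estimate_tokens(text) + reserve_for_output <= CONTEXT_LIMITS[family]


def split_into_chunks(text: str, family: str,
                      reserve_for_output: int = 4_000) -> list[str]:
    """Split text into pieces that each fit the family's context budget."""
    budget_chars = (CONTEXT_LIMITS[family] - reserve_for_output) * CHARS_PER_TOKEN
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]
```

Under these assumptions, a 600,000-character document (about 150,000 estimated tokens) fits the larger window in one request but needs to be split into two chunks for the smaller one.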

Performance and Output Quality

Claude

Claude is often reported to deliver more nuanced reasoning and more coherently structured output, which stands out in research-intensive tasks, structured writing, and complex creative content. That makes it strong for internal tools where output quality and logical follow-through matter. (Epista)

It’s expected to perform well for:

- Document summarization

- Complex knowledge work

- Long-form content generation

Mistral

Benchmarks and community reports suggest that Mistral models can be competitive in many tasks while offering improved cost efficiency and lighter infrastructure needs. Some variants are rated at ~90% or more of the performance of more expensive models while being cheaper to run. (AIonX)

Anecdotally, developers note that Mistral may outperform other models on specific structured tasks like converting raw data into typed structures (e.g., transforming JSON into TypeScript), indicating practical utility for developer workflows. (Reddit)
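To make that task concrete, here is a minimal deterministic baseline for it: a small converter that infers a TypeScript interface from one JSON sample. It is not how any model works; it is a sketch you could use to spot-check model output on simple inputs. The function names are hypothetical, and it assumes a top-level JSON object whose keys are valid TypeScript identifiers.

```python
import json


def ts_type(value) -> str:
    """Map a parsed JSON value to a TypeScript type expression."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if isinstance(value, str):
        return "string"
    if value is None:
        return "null"
    if isinstance(value, list):
        if not value:
            return "unknown[]"
        kinds = sorted({ts_type(item) for item in value})
        inner = kinds[0] if len(kinds) == 1 else "(" + " | ".join(kinds) + ")"
        return inner + "[]"
    if isinstance(value, dict):
        fields = "; ".join(f"{k}: {ts_type(v)}" for k, v in value.items())
        return "{ " + fields + " }"
    raise TypeError(f"unsupported JSON value: {value!r}")


def to_interface(name: str, raw_json: str) -> str:
    """Render a TypeScript interface for a top-level JSON object."""
    obj = json.loads(raw_json)
    if not isinstance(obj, dict):
        raise ValueError("expected a JSON object at the top level")
    lines = [f"interface {name} {{"]
    lines += [f"  {key}: {ts_type(val)};" for key, val in obj.items()]
    lines.append("}")
    return "\n".join(lines)
```

For instance, `to_interface("User", '{"id": 1, "name": "Ada", "tags": ["a", "b"], "active": true}')` yields an interface with `id: number`, `name: string`, `tags: string[]`, and `active: boolean`.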

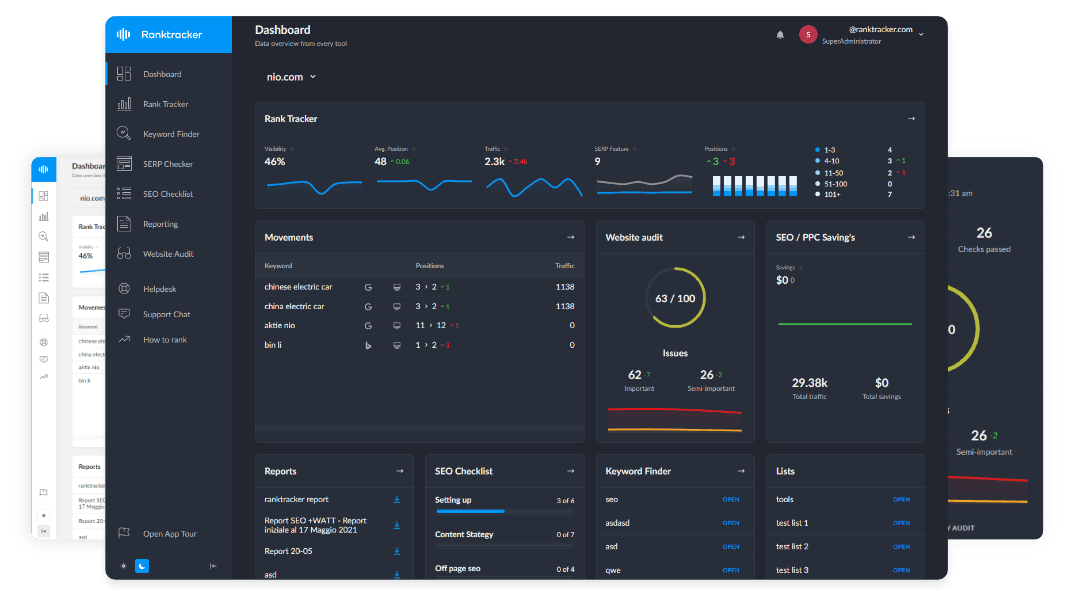

The All-in-One Platform for Effective SEO

Behind every successful business is a strong SEO campaign. But with countless optimization tools and techniques out there to choose from, it can be hard to know where to start. Well, fear no more, cause I've got just the thing to help. Presenting the Ranktracker all-in-one platform for effective SEO

We have finally opened registration to Ranktracker absolutely free!

Create a free accountOr Sign in using your credentials

For code-centric tasks or structured analysis where absolute narrative quality is secondary to technical correctness, Mistral variants may be preferable.

Pricing & Cost Efficiency

Claude

Managed API pricing tends to be higher due to its enterprise-ready stack and its safety and compliance investments. For example, larger Claude variants with long context windows carry correspondingly higher input and output pricing. (LangDB AI Gateway)

Pros:

- Predictable, supported pricing

- Less engineering overhead

- Compliance features included

Cons:

- Higher per-token cost

- Less control over infrastructure

Mistral

Mistral’s pricing, especially on open or self-hosted deployments, tends to offer lower token costs and more deployment flexibility. For teams with high-volume needs or those building on a budget, this can be a major advantage. (LangDB AI Gateway)

Pros:

- Lower cost per token

- Flexibility in deployment

- Scales horizontally with custom infra

Cons:

- Requires homegrown infrastructure or third-party services

- Fewer built-in safety layers (depending on deployment)
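The trade-off above comes down to simple arithmetic: per-token price times volume, plus any fixed infrastructure cost you take on yourself. The sketch below shows the calculation only. Every price in it is a made-up placeholder, not a quote from either vendor, and the `Pricing` class and `monthly_cost` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_mtok: float       # USD per million input tokens
    output_per_mtok: float      # USD per million output tokens
    fixed_monthly: float = 0.0  # e.g. self-hosting infrastructure, in USD


def monthly_cost(pricing: Pricing, requests: int,
                 in_tokens: int, out_tokens: int) -> float:
    """Estimated monthly spend for a given request volume and size."""
    total_in = requests * in_tokens
    total_out = requests * out_tokens
    variable = (total_in * pricing.input_per_mtok
                + total_out * pricing.output_per_mtok) / 1_000_000
    return variable + pricing.fixed_monthly


# Placeholder numbers for illustration only; substitute current price sheets.
premium = Pricing(input_per_mtok=10.0, output_per_mtok=30.0)
budget = Pricing(input_per_mtok=2.0, output_per_mtok=6.0, fixed_monthly=1500.0)
```

With these placeholder figures, 100,000 requests per month at 2,000 input and 500 output tokens each come to 3,500 USD on the premium tier and 2,200 USD on the budget tier once the fixed infrastructure cost is included. At low volume the fixed cost can outweigh the per-token savings, which is why the comparison should be rerun with your own numbers.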

Best Use Cases

Claude

Choose Claude if you need:

- High-quality reasoning and deep context

- Managed API with enterprise support

- Complex applications involving research, legal text, or documentation

- Consistent outputs with strong alignment guarantees

Mistral

Choose Mistral if you need:

- Cost-efficient, scalable AI

- Open-model flexibility and customization

- Self-hosted or hybrid deployment scenarios

- Developer workflows prioritizing speed over deep narrative nuance

SEO and Developer Workflow Implications

AI models are not SEO tools in themselves. The difference lies in how well they integrate into structured content workflows that include validation and measurement.

A professional developer or content workflow in 2026 should include:

- Generate content or responses using Claude or Mistral

- Validate keyword opportunities and search intent via Ranktracker

- Analyze SERP competitors and content gaps

- Publish optimized content

- Track Top 100 rankings daily to measure performance and iterate

AI accelerates drafting, code scaffolding, and analysis — but SEO tools confirm whether the output succeeds competitively.

Final Verdict: Claude vs Mistral for Developers

Claude and Mistral are both strong AI models for developers in 2026 — but they serve distinct needs:

- Claude excels in deep reasoning, enterprise-grade API access, and structured outputs for complex tasks.

- Mistral excels in cost efficiency, flexible deployment, and practical developer workflows where performance and scaling matter.

Your choice depends on priorities:

- For complex logic, reasoning depth, and enterprise support, Claude is often worth the cost.

- For flexible, scale-driven, low-cost AI builds, Mistral’s open model ecosystem is highly compelling.

Both can coexist depending on workload: use Claude where quality and depth matter most, and Mistral where speed, scale, and cost are the priority.
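A coexistence setup like this can be expressed as a small routing rule. The sketch below is one possible policy, not a recommendation from either vendor: the `Task` fields and the `choose_family` function are hypothetical, and the context limits are the approximate figures cited earlier in this article.

```python
from dataclasses import dataclass

# Approximate context limits cited earlier in the article (in tokens).
CLAUDE_CONTEXT = 200_000
MISTRAL_CONTEXT = 128_000


@dataclass(frozen=True)
class Task:
    prompt_tokens: int
    needs_deep_reasoning: bool


def choose_family(task: Task) -> str:
    """Pick a model family: depth and long context first, cost otherwise."""
    if task.prompt_tokens > CLAUDE_CONTEXT:
        raise ValueError("prompt exceeds both context limits; split it first")
    if task.prompt_tokens > MISTRAL_CONTEXT:
        return "claude"   # only the larger window can hold the prompt
    if task.needs_deep_reasoning:
        return "claude"   # quality and depth matter most
    return "mistral"      # default to the cheaper, faster option
```

A bulk classification job of 1,000 tokens with no deep reasoning requirement would route to `"mistral"`, while a 150,000-token contract review would route to `"claude"` on context size alone.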